PyTorch and TensorFlow are two popular software frameworks used for building machine learning and deep learning models.

PyTorch vs. TensorFlow

- PyTorch is a relatively young deep learning framework that is more Python-friendly and ideal for research, prototyping and dynamic projects.

- TensorFlow is a mature deep learning framework with strong visualization capabilities and several options for high-level model development. It has production-ready deployment options and support for mobile platforms.

Deep learning seeks to develop human-like computers to solve real-world problems, all by using special brain-like architectures called artificial neural networks. To help develop these architectures, tech giants like Meta and Google have released various frameworks for the Python deep learning environment, making it easier to learn, build and train diversified neural networks.

In this article, we’ll compare two widely used deep learning frameworks: PyTorch and TensorFlow.

What Is PyTorch?

PyTorch is an open-source deep learning framework that supports Python, C++ and Java. It is commonly used to develop machine learning models for computer vision, natural language processing and other deep learning tasks. PyTorch was created by the team at Meta AI and open sourced on GitHub in 2017.

PyTorch has gained popularity for its simplicity, ease of use, dynamic computational graph and efficient memory usage, which we’ll discuss in more detail later.

What Is TensorFlow?

TensorFlow is an open-source deep learning framework for Python, C++, Java and JavaScript. It can be used to build machine learning models for a range of applications, including image recognition, natural language processing and task automation. TensorFlow was created by developers at Google and released in 2015.

TensorFlow is widely applied by companies to develop and automate new systems. It draws its reputation from its distributed training support, scalable production and deployment options, and support for various devices like Android.

Pros and Cons of PyTorch vs. TensorFlow

PyTorch Pros

- Python-like coding.

- Uses dynamic computational graphs.

- Easy and quick editing.

- Good documentation and community support.

- Open source.

- Plenty of projects out there using PyTorch.

PyTorch Cons

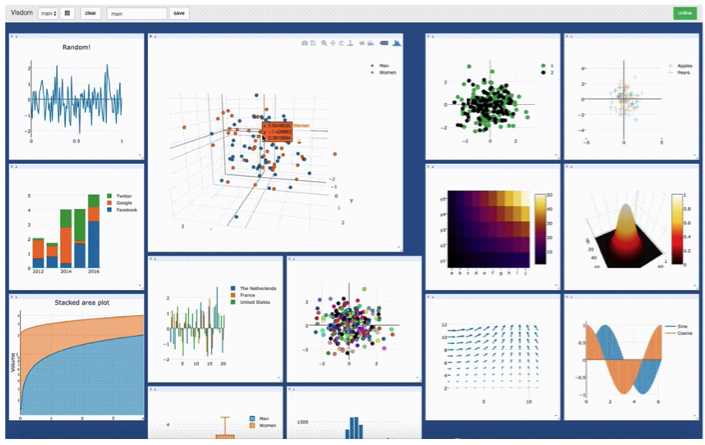

- Third-party needed for data visualization.

- API server needed for production.

TensorFlow Pros

- Simple built-in high-level API.

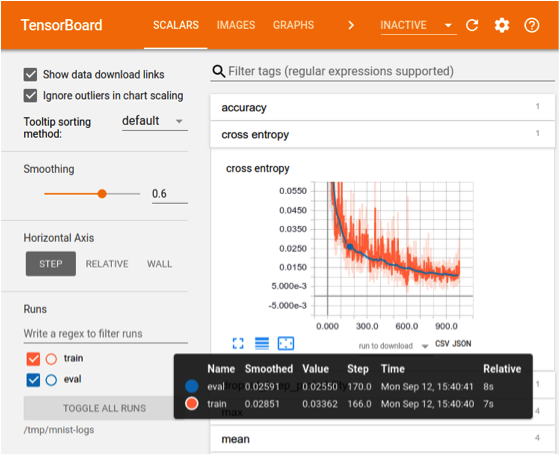

- Visualizing training with TensorBoard library.

- Production-ready thanks to TensorFlow Serving framework.

- Easy mobile support.

- Open source.

- Good documentation and community support.

TensorFlow Cons

- Steep learning curve.

- Uses static computational graphs.

- Debugging requires special methods.

- Hard to make quick changes.

Difference Between PyTorch vs. TensorFlow

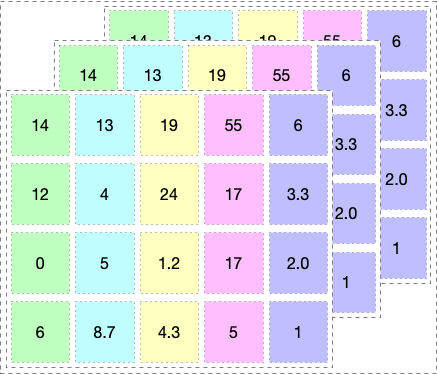

The key difference between PyTorch and TensorFlow is the way they execute code. Both frameworks operate on the same fundamental data type, the tensor. You can think of a tensor as a multidimensional array.
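To make the "multidimensional array" idea concrete, here is a minimal sketch using PyTorch tensors of increasing rank. The variable names are illustrative only; the same idea applies to TensorFlow tensors.

```python
import torch

# Tensors of increasing rank (number of dimensions).
scalar = torch.tensor(3.0)               # 0-D: a single number
vector = torch.tensor([1.0, 2.0, 3.0])   # 1-D: a list of numbers
matrix = torch.ones(2, 3)                # 2-D: rows and columns
cube = torch.zeros(2, 3, 4)              # 3-D: a stack of matrices

for name, t in [("scalar", scalar), ("vector", vector),
                ("matrix", matrix), ("cube", cube)]:
    # ndim is the rank, shape is the size along each dimension.
    print(name, t.ndim, tuple(t.shape))
```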

1. Mechanism: Dynamic vs. Static Graph Definition

TensorFlow is a framework composed of two core building blocks:

- A library for defining computational graphs and runtime for executing such graphs on a variety of different hardware.

- A computational graph which has many advantages (but more on that in just a moment).

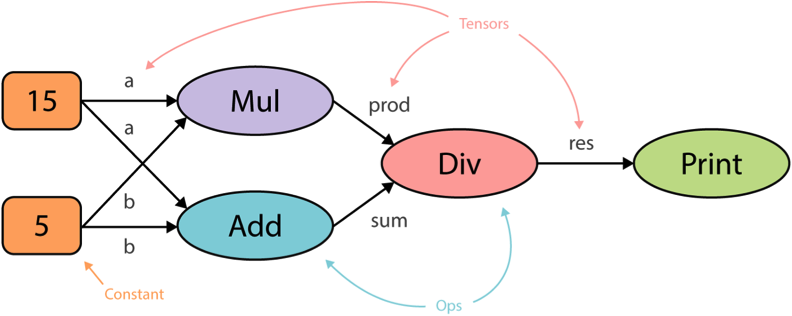

A computational graph is an abstract way of describing computations as a directed graph. A graph is a data structure consisting of nodes (vertices) and edges. It’s a set of vertices connected pairwise by directed edges.

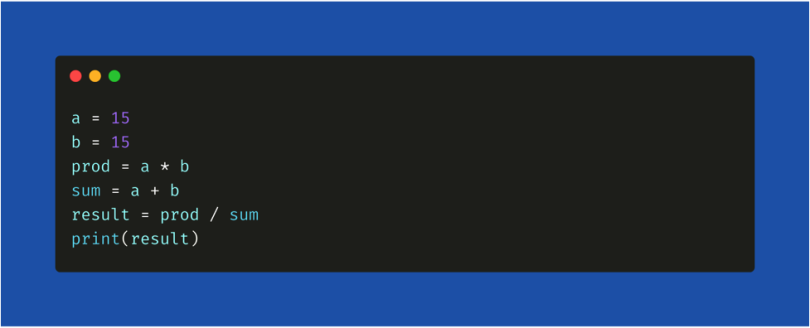

When you run code in TensorFlow's classic graph mode (the default in TensorFlow 1.x), the computation graphs are defined statically. All communication with the outside world is performed through a tf.Session object and tf.placeholder tensors, which are substituted with external data at runtime.

The computational graph is therefore generated statically, before the code is run. The core advantage of having a computational graph is that it allows parallelism and dependency-driven scheduling, which makes training faster and more efficient.
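The define-then-run pattern described above can be sketched as follows. This is a minimal illustration, assuming a TensorFlow 2.x installation where the 1.x-style API is reached through tf.compat.v1; the tensor names and values are made up for the example.

```python
import tensorflow as tf

# Build phase: describe the computation. Nothing is calculated yet.
graph = tf.Graph()
with graph.as_default():
    # A placeholder stands in for data that arrives at run time.
    x = tf.compat.v1.placeholder(tf.float32, shape=(None, 2), name="x")
    w = tf.constant([[1.0], [2.0]], name="w")
    y = tf.matmul(x, w, name="y")

# Run phase: a session feeds real data into the placeholder
# and executes the already-defined graph.
with tf.compat.v1.Session(graph=graph) as sess:
    result = sess.run(y, feed_dict={x: [[1.0, 1.0], [3.0, 4.0]]})

print(result)
```

Because the whole graph exists before any data flows through it, the runtime can inspect it and schedule independent operations ahead of time.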

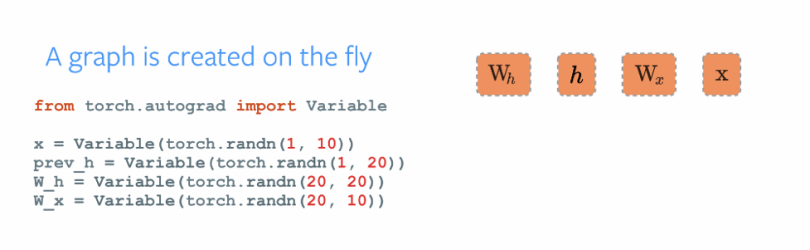

Similar to TensorFlow, PyTorch has two core building blocks:

- Imperative and dynamic building of computational graphs.

- Autograd: Performs automatic differentiation of the dynamic graphs.

In PyTorch, the graph changes and executes nodes as you go, with no special session interfaces or placeholders. Overall, the framework is more tightly integrated with the Python language and feels more native most of the time. Hence, PyTorch feels like a Pythonic framework, while TensorFlow can feel like a completely new language.
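A minimal sketch of what "dynamic" means in practice: ordinary Python control flow decides how many operations end up in the graph, and autograd differentiates through whatever was actually executed. The function name and the values are illustrative, not taken from any particular project.

```python
import torch

def forward(x, w):
    h = x * w
    steps = 0
    # A plain Python loop: the number of iterations depends on the data,
    # so the graph is only known once the code has run.
    while h.norm() < 10:
        h = h * 2
        steps += 1
    return h.sum(), steps

x = torch.ones(3)
w = torch.tensor(0.5, requires_grad=True)

loss, steps = forward(x, w)
loss.backward()  # autograd walks back through the recorded operations

print("doubling steps:", steps)
print("loss:", loss.item())
print("d(loss)/dw:", w.grad.item())
```

No session or placeholder is involved; changing the input changes the recorded graph on the next call.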

How much this matters depends on the framework and version you use. TensorFlow 1.x offered dynamic graphs through a separate library called TensorFlow Fold, and TensorFlow 2.x runs eagerly by default, whereas PyTorch has had dynamic graphs built in from the start.

2. Distributed Training

One main feature that distinguishes PyTorch from TensorFlow is data parallelism. PyTorch optimizes performance by taking advantage of native support for asynchronous execution from Python. In TensorFlow, you have to manually code and fine-tune every operation to run on a specific device to allow distributed training. You can replicate in TensorFlow everything PyTorch does here, but it takes more effort. In PyTorch, parallelizing a model across devices takes only a few lines.
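As a rough illustration of how little code data parallelism needs in PyTorch, the sketch below wraps a model in torch.nn.DataParallel, which splits each batch across the available GPUs on one machine and falls back to a normal forward pass when there are none. The layer sizes are arbitrary. For multi-machine training, PyTorch's DistributedDataParallel is the usual choice, but it needs process-group setup that is left out here.

```python
import torch
import torch.nn as nn

# Use a GPU when one is available; otherwise stay on the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# An arbitrary small model, placed on the primary device.
model = nn.Linear(10, 2).to(device)

# One line to replicate the model across all visible GPUs.
model = nn.DataParallel(model)

batch = torch.randn(8, 10, device=device)
out = model(batch)  # the batch is split across GPUs and the results gathered

print(out.shape)
```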

3. Visualization

When it comes to visualizing the training process, TensorFlow takes the lead. Data visualization helps the developer track the training process and debug more conveniently. TensorFlow's visualization library is called TensorBoard. PyTorch developers use Visdom, but its features are minimal and limited, so TensorBoard scores a point for visualizing the training process.

Features of TensorBoard

- Tracking and visualizing metrics such as loss and accuracy.

- Visualizing the computational graph (ops and layers).

- Viewing histograms of weights, biases or other tensors as they change over time.

- Displaying images, text and audio data.

- Profiling TensorFlow programs.
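The first feature in the list, tracking a metric such as loss, can be sketched with the tf.summary API from TensorFlow 2.x. The loss values below are made up, and the temporary log directory is an assumption for the example; a real training loop would log its own metrics to a directory of your choice.

```python
import os
import tempfile

import tensorflow as tf

# Hypothetical log location for this demo.
log_dir = tempfile.mkdtemp(prefix="tb_demo_")
writer = tf.summary.create_file_writer(log_dir)

with writer.as_default():
    for step in range(5):
        # Made-up numbers standing in for a real training loss.
        fake_loss = 1.0 / (step + 1)
        tf.summary.scalar("loss", fake_loss, step=step)
writer.flush()

print("Event files:", os.listdir(log_dir))
# To view the curve, run from a shell: tensorboard --logdir <log_dir>
```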

Features of Visdom

- Handling callbacks.

- Plotting graphs and details.

- Managing environments.

4. Production Deployment

When it comes to deploying trained models to production, TensorFlow is the clear winner. We can deploy TensorFlow models directly using TensorFlow Serving, a framework that exposes models through a REST API.
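To give a feel for the REST interface, here is a small sketch that builds a prediction request for a TensorFlow Serving instance. It only constructs the URL and JSON body and does not contact a server. The host, the model name my_model and the helper function are hypothetical; 8501 is TensorFlow Serving's default REST port.

```python
import json

def build_predict_request(host, model_name, instances, port=8501):
    """Return the URL and JSON body for a TensorFlow Serving predict call."""
    url = f"http://{host}:{port}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": instances})
    return url, body

# Hypothetical host and model name, with one input row.
url, body = build_predict_request("localhost", "my_model", [[1.0, 2.0, 3.0]])

print(url)
print(body)
```

Sending the body as an HTTP POST to that URL (for example with curl or an HTTP client library) would return the model's predictions as JSON, assuming a server is running with that model loaded.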

In PyTorch, production deployment became easier to handle with the 1.0 stable release, but the framework doesn't provide a way to deploy models directly to the web. You'll have to use either Flask or Django as the backend server. So, TensorFlow Serving may be a better option if performance is a concern.
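A minimal sketch of the Flask approach, assuming Flask and PyTorch are installed. The /predict route, the JSON field names and the tiny untrained model are all placeholders chosen for illustration; a real service would load trained weights and validate its input.

```python
import torch
import torch.nn as nn
from flask import Flask, jsonify, request

app = Flask(__name__)

# Placeholder model; in practice you would load trained weights here.
model = nn.Linear(4, 2)
model.eval()

@app.route("/predict", methods=["POST"])
def predict():
    # Expect a JSON body like {"instances": [[0.1, 0.2, 0.3, 0.4]]}.
    payload = request.get_json()
    inputs = torch.tensor(payload["instances"], dtype=torch.float32)
    with torch.no_grad():  # inference only, no gradients needed
        outputs = model(inputs)
    return jsonify({"predictions": outputs.tolist()})

if __name__ == "__main__":
    # Flask's built-in server is for development, not production traffic.
    app.run(port=5000)
```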

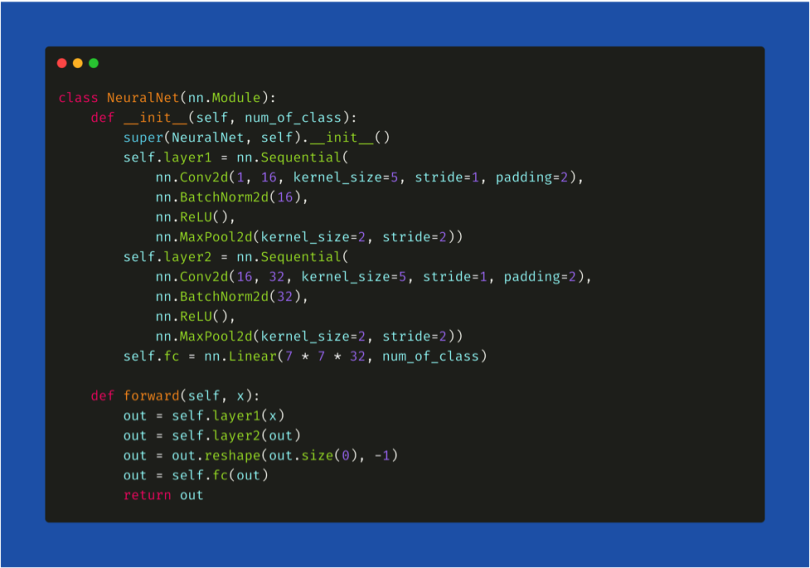

5. Defining a Neural Network in PyTorch and TensorFlow

Let’s compare how we declare the neural network in PyTorch and TensorFlow.

In PyTorch, your neural network is a class, and the layers needed to build the architecture are imported from the torch.nn package. All the layers are first declared in the __init__() method, and then the forward() method defines how the input x passes through the layers of the network. Lastly, we declare a variable model and assign it an instance of the defined architecture (model = NeuralNet()).
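The pattern described above might look like the following sketch. The layer sizes (784 inputs, 128 hidden units, 10 outputs) are arbitrary choices for illustration.

```python
import torch
import torch.nn as nn

class NeuralNet(nn.Module):
    def __init__(self):
        super().__init__()
        # Declare the layers.
        self.fc1 = nn.Linear(784, 128)
        self.relu = nn.ReLU()
        self.fc2 = nn.Linear(128, 10)

    def forward(self, x):
        # Define how the input flows through the layers.
        x = self.fc1(x)
        x = self.relu(x)
        return self.fc2(x)

model = NeuralNet()

# Run a dummy batch of four inputs through the network.
logits = model(torch.randn(4, 784))
print(logits.shape)
```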

Keras, a neural network framework that uses TensorFlow as its backend, has been merged into the TensorFlow repository, so the syntax for declaring layers in TensorFlow is similar to that of Keras. First, we declare the variable and assign it the type of architecture we will be declaring, in this case a Sequential() architecture. Next, we add layers one after another using the model.add() method. The layer types can be imported from tf.keras.layers.
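A comparable sketch in TensorFlow, assuming TensorFlow 2.x with its bundled Keras API. The layer sizes mirror an arbitrary 784-128-10 network and are for illustration only.

```python
import tensorflow as tf

# Declare the architecture type first.
model = tf.keras.Sequential()

# Then add layers one after another.
model.add(tf.keras.Input(shape=(784,)))
model.add(tf.keras.layers.Dense(128, activation="relu"))
model.add(tf.keras.layers.Dense(10))

# Run a dummy batch of four inputs through the network.
outputs = model(tf.zeros((4, 784)))
print(outputs.shape)
```

Compared with the class-based PyTorch style, the forward pass here is implied by the order in which the layers are added.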

What Can Be Built With PyTorch vs. TensorFlow?

Initially, neural networks were used to solve simple classification problems like handwritten digit recognition or identifying a car's registration number using cameras. But thanks to the latest frameworks and NVIDIA's high-performance graphics processing units (GPUs), we can train neural networks on terabytes of data and solve far more complex problems. A few notable achievements include reaching state-of-the-art performance on the ImageNet dataset using convolutional neural networks implemented in both TensorFlow and PyTorch. The trained model can be used in different applications, such as object detection, semantic image segmentation and more.

Although the architecture of a neural network can be implemented in either framework, the result will not be the same. The training process has a lot of parameters that are framework dependent. For example, in PyTorch you move models and tensors to a GPU explicitly, and computation runs through CUDA, NVIDIA's GPU computing platform. TensorFlow also uses CUDA on NVIDIA GPUs but handles device placement automatically, so the time to train these models can vary based on the framework you choose.

Top PyTorch Projects

- CheXNet: Radiologist-level pneumonia detection on chest X-rays with deep learning.

- Pyro: A universal probabilistic programming language (PPL) written in Python and supported by PyTorch on the backend.

Top TensorFlow Projects

- Magenta: An open-source research project exploring the role of machine learning as a tool in the creative process.

- Sonnet: Sonnet is a library built on top of TensorFlow for building complex neural networks.

- Ludwig: Ludwig is a toolbox to train and test deep learning models without the need to write code.

These are a few frameworks and projects that are built on top of PyTorch and TensorFlow. You can find more on GitHub and the official websites of PyTorch and TensorFlow.

PyTorch vs. TensorFlow Installation and Updates

PyTorch and TensorFlow are continuously releasing updates and new features that make the training process more efficient, smooth and powerful.

To install the latest version of these frameworks on your machine, you can either build from source or install from pip.

Installation instructions can be found on the official PyTorch and TensorFlow websites.

PyTorch Installation

Linux

pip3 install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cpu

macOS

pip3 install torch torchvision torchaudio

Windows

pip3 install torch torchvision torchaudio

TensorFlow Installation

Linux

python3 -m pip install tensorflow[and-cuda]

To verify installation: python3 -c "import tensorflow as tf; print(tf.config.list_physical_devices('GPU'))"

macOS

python3 -m pip install tensorflow

To verify installation: python3 -c "import tensorflow as tf; print(tf.reduce_sum(tf.random.normal([1000, 1000])))"

Windows Native

conda install -c conda-forge cudatoolkit=11.2 cudnn=8.1.0

#Anything above 2.10 is not supported on the GPU on Windows Native

python -m pip install "tensorflow<2.11"

To verify installation: python -c "import tensorflow as tf; print(tf.config.list_physical_devices('GPU'))"

Windows WSL 2

python3 -m pip install tensorflow[and-cuda]

To verify installation: python3 -c "import tensorflow as tf; print(tf.config.list_physical_devices('GPU'))"

PyTorch vs. TensorFlow: My Recommendation

TensorFlow is a very powerful and mature deep learning library with strong visualization capabilities and several options to use for high-level model development. It has production-ready deployment options and support for mobile platforms. PyTorch, on the other hand, is still a relatively young framework with stronger community movement and it’s more Python-friendly.

My recommendation: if you want to move fast and build AI-related products, TensorFlow is a good choice. PyTorch is better suited to research-oriented developers, as it supports fast and dynamic training.

Frequently Asked Questions

Is PyTorch better than TensorFlow?

Both PyTorch and TensorFlow are helpful for developing deep learning models and training neural networks. Each has its own advantages depending on the machine learning project being worked on.

PyTorch is ideal for research and small-scale projects prioritizing flexibility, experimentation and quick editing capabilities for models. TensorFlow is ideal for large-scale projects and production environments that require high-performance and scalable models.

Is PyTorch worth learning?

PyTorch is worth learning for those who want to experiment with deep learning models and are already familiar with Python syntax. It is a widely used framework in deep learning research and academic environments.

Is TensorFlow worth learning?

TensorFlow is worth learning for those interested in full-production machine learning systems. It is a widely used framework among companies for building and deploying production-ready models.

Does OpenAI use PyTorch or TensorFlow?

OpenAI standardized on PyTorch as its deep learning framework in 2020.

Is TensorFlow better than PyTorch?

TensorFlow can be the better choice for deploying large-scale, production-grade machine learning systems. It is also effective for customizing neural network features.

Does ChatGPT use PyTorch or TensorFlow?

PyTorch is likely used by ChatGPT as its primary machine learning framework, as OpenAI stated its deep learning framework is standardized on PyTorch.

Is TensorFlow difficult to learn?

Yes, TensorFlow is often considered difficult to learn due to its structure and complexity. Having programming and machine learning knowledge may be required to fully understand how to use the TensorFlow framework.