Unit tests are automated tests. In other words, unit testing is performed by software (such as a unit testing framework or unit testing tool) and not manually by a developer. This means unit tests allow automated, repeatable, continuous testing.

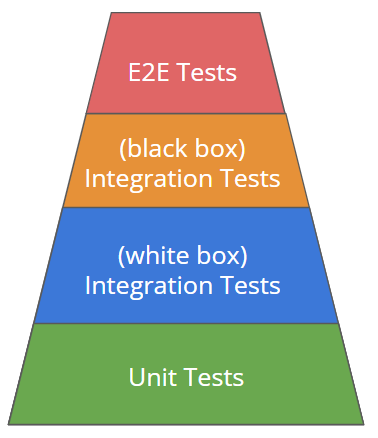

Unit tests sit on the first (lowest) level of the test pyramid because they are the fastest and least expensive tests a developer can write. Put another way, unit tests are the first step in testing an application. Once the unit tests pass, the subsequent levels, such as integration tests and end-to-end (E2E) tests, should be run. These are more computationally expensive, but they exercise larger portions of the application working together.

Why Do Unit Testing?

The advantage of automated testing (and unit testing specifically) is that it makes it possible to test quickly and thus more frequently, whereas manual testing requires much more human intervention. Unit testing allows developers to detect errors in a timely manner, especially regression errors, which are defects introduced into previously working behavior by changes to the program code. As a result, unit tests are ideal for rapidly identifying local errors in the code while new features and code adaptations are being developed.
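A regression check can be sketched in a few lines. The example below is a minimal illustration in plain Java, written without a testing framework so that it runs on its own; the class names `LeapYear` and `LeapYearRegressionChecks` are hypothetical and chosen only for this sketch.

```java
// Hypothetical unit under test.
class LeapYear {
    // Gregorian rule: divisible by 4, except century years not divisible by 400.
    static boolean isLeapYear(int year) {
        return (year % 4 == 0 && year % 100 != 0) || year % 400 == 0;
    }
}

class LeapYearRegressionChecks {
    // Everyday cases that even a simplified "year % 4 == 0" rule would satisfy.
    static boolean commonYears() {
        return LeapYear.isLeapYear(2024) && !LeapYear.isLeapYear(2023);
    }

    // Regression check: if someone later "simplifies" the rule to year % 4 == 0,
    // the check for 1900 fails and flags the change immediately.
    static boolean centuryYears() {
        return !LeapYear.isLeapYear(1900) && LeapYear.isLeapYear(2000);
    }

    public static void main(String[] args) {
        System.out.println("common years ok: " + commonYears());
        System.out.println("century years ok: " + centuryYears());
    }
}
```

Because the checks run in milliseconds, they can be repeated after every change, which is what makes regressions visible early.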

In a broader sense, there are plenty of good reasons to test software properly. First and foremost is the sobering fact that software always contains errors, which can lead to misbehavior. This misbehavior, in turn, can cost money or, depending on where and how the software is used, human lives. In 2018, a self-driving Uber test vehicle struck and killed a pedestrian. More thorough testing of the corresponding software in advance might have helped prevent the malfunction of this autonomous vehicle.

Tests are living documentation. They provide information about the quality of the software and thus build our confidence in it. In addition, once the expected initial difficulties have been overcome, testing can noticeably accelerate software development.

Unit Testing vs. Integration Testing

Unit tests focus on isolating the smallest parts of an application, known as units, for testing. A unit may be a specific piece of code, a method or a function. By testing these components individually, teams can verify whether each unit works well on its own before testing the application as a whole.

Integration testing then combines these units and tests them as a group. Because different developers may work on different components, parts that pass their own unit tests may still fail to work together. Serving as a follow-up to unit testing, integration testing makes sure that the various units complement each other and function smoothly as a whole.

While they fulfill unique purposes, unit testing and integration testing can be completed with the same testing frameworks. In Python, for example, popular options include pytest and unittest. The main difference between the two is that pytest typically involves less boilerplate code, saving time for developers, while unittest ships with the Python standard library. Otherwise, both are effective when conducting unit and integration tests.
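The difference between the two levels can be illustrated with a small sketch in Java. It uses plain methods instead of a testing framework so that it runs without dependencies, and every name in it (`TaxService`, `TableTaxService`, `PriceCalculator`, `UnitVsIntegrationSketch`) is hypothetical.

```java
// Hypothetical collaborator interface.
interface TaxService {
    double rateFor(String countryCode);
}

// Hypothetical real implementation, used by the integration-level check.
class TableTaxService implements TaxService {
    @Override
    public double rateFor(String countryCode) {
        return "DE".equals(countryCode) ? 0.19 : 0.0;
    }
}

// Hypothetical unit under test.
class PriceCalculator {
    private final TaxService taxService;

    PriceCalculator(TaxService taxService) {
        this.taxService = taxService;
    }

    // Returns the gross price in cents, rounded to the nearest cent.
    long grossCents(long netCents, String countryCode) {
        return Math.round(netCents * (1.0 + taxService.rateFor(countryCode)));
    }
}

class UnitVsIntegrationSketch {
    // Unit level: the collaborator is replaced by a stub (a lambda with a fixed rate),
    // so only PriceCalculator's own logic is exercised.
    static boolean unitCheck() {
        PriceCalculator calculator = new PriceCalculator(countryCode -> 0.10);
        return calculator.grossCents(1000, "XX") == 1100;
    }

    // Integration level: the real TableTaxService is wired in,
    // so both units are verified working together.
    static boolean integrationCheck() {
        PriceCalculator calculator = new PriceCalculator(new TableTaxService());
        return calculator.grossCents(1000, "DE") == 1190;
    }

    public static void main(String[] args) {
        System.out.println("unit check passed: " + unitCheck());
        System.out.println("integration check passed: " + integrationCheck());
    }
}
```

If the unit check passes but the integration check fails, the defect is likely in the collaborator or in how the two parts are connected rather than in the calculator itself.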

Unit Testing Benefits

With unit tests, a software developer can easily uncover changes in the code’s behavior. Unit tests also reveal unintended effects in newly added functions when features are introduced to an existing application.

Unit tests bring many advantages to a software development project:

- Developers receive fast feedback on code quality through regular execution of unit tests.

- Unit tests force developers to work on the code instead of just writing it. In other words, the developer must constantly rethink their own methodology and optimize the written code after receiving feedback from the unit test.

- Unit tests enable high test coverage.

- It’s possible to perform automated, high-quality testing of the entire software unit by unit with speed and accuracy.

We use unit tests to detect errors and problems in the code at an early stage of development. If a test discovers an error, the cause is most likely in the small unit of source code that has just been tested, which narrows the search considerably.

Unit Testing Tools

There are many ways to implement unit tests. For Java, the most popular option is JUnit, a widely used framework that became the go-to solution for automated unit testing of methods and classes. A number of similar frameworks and extensions have adopted the JUnit concept and enable unit tests in other programming languages and contexts: NUnit (.NET), CppUnit (C++), DbUnit (databases), PHPUnit (PHP), HttpUnit (web development) and more. Such unit testing frameworks are commonly referred to collectively as “xUnit.”
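Conceptually, frameworks of this family find the methods marked as tests, run each one and report which passed and which failed. The following sketch imitates that idea with Java reflection. It is a hand-rolled illustration, not how JUnit is actually implemented, and the names `@Check`, `StringChecks` and `MiniRunner` are hypothetical.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.InvocationTargetException;
import java.lang.reflect.Method;

// Hypothetical marker annotation, standing in for a framework's test annotation.
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.METHOD)
@interface Check {}

// Hypothetical test class with one passing and one failing check.
class StringChecks {
    @Check
    void upperCaseWorks() {
        if (!"ABC".equals("abc".toUpperCase())) {
            throw new AssertionError("upper case conversion is wrong");
        }
    }

    @Check
    void deliberatelyFails() {
        throw new AssertionError("failure included for demonstration");
    }
}

class MiniRunner {
    // Runs every @Check method on a fresh instance and returns {passed, failed}.
    static int[] run(Class<?> testClass) {
        int passed = 0;
        int failed = 0;
        for (Method method : testClass.getDeclaredMethods()) {
            if (!method.isAnnotationPresent(Check.class)) {
                continue;
            }
            try {
                Object instance = testClass.getDeclaredConstructor().newInstance();
                method.setAccessible(true);
                method.invoke(instance);
                passed++;
            } catch (InvocationTargetException e) {
                // The check (or the constructor) threw: count it as a failure.
                failed++;
                System.out.println(method.getName() + " failed: " + e.getCause().getMessage());
            } catch (ReflectiveOperationException e) {
                throw new IllegalStateException("cannot run " + method.getName(), e);
            }
        }
        return new int[] {passed, failed};
    }

    public static void main(String[] args) {
        int[] result = run(StringChecks.class);
        System.out.println(result[0] + " passed, " + result[1] + " failed");
    }
}
```

Real frameworks add much more on top of this loop, such as setup and teardown hooks, richer assertions and IDE integration, which is why using an established tool is usually preferable to writing a runner by hand.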

Unit Testing Techniques

When performing unit testing, there are several approaches developers can take, depending on the purpose and conditions of the test.

Black Box Testing

Black box testing is when developers test an application without being able to see any of the code or internal infrastructure of that application. This allows teams to take on the perspective of users and experience what it’s like interacting with a product. Black box testing is ideal for assessing UX design factors like usability, accessibility and functionality.

White Box Testing

White box testing refers to when developers test an application while having full knowledge of the application’s internal infrastructure, code and documentation. This approach enables teams to focus more on testing internal processes, like making sure lines of code work properly, locating bugs or security flaws and verifying the functionality of units.

Gray Box Testing

Gray box testing is a combination of black box and white box testing, where developers have partial knowledge of an application’s internal infrastructure. For example, developers may know some of the code or they may just have access to documentation. Developers can then evaluate the functionality and security of a product without going as in-depth as white box testing.
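At the level of a single unit, the difference between the black box and white box approaches mostly shows in how test inputs are chosen. The sketch below assumes a hypothetical `Shipping.costFor` method with a made-up price list. It is written in plain Java without a testing framework so that it runs on its own.

```java
// Hypothetical unit under test.
class Shipping {
    // Made-up price list: up to 1 kg costs 5, up to 10 kg costs 9, heavier parcels cost 20.
    static int costFor(double weightKg) {
        if (weightKg <= 0) {
            throw new IllegalArgumentException("weight must be positive");
        }
        if (weightKg <= 1.0) {
            return 5;
        }
        if (weightKg <= 10.0) {
            return 9;
        }
        return 20;
    }
}

class BoxTestingSketch {
    // Black box: inputs are picked from the documented price list alone,
    // without looking at how costFor is implemented.
    static boolean blackBoxChecks() {
        return Shipping.costFor(0.5) == 5
            && Shipping.costFor(5.0) == 9
            && Shipping.costFor(25.0) == 20;
    }

    // White box: inputs are picked by reading the code, so that every branch
    // and both boundary comparisons (<= 1.0 and <= 10.0) are exercised.
    static boolean whiteBoxChecks() {
        boolean rejectsZero;
        try {
            Shipping.costFor(0.0);
            rejectsZero = false;
        } catch (IllegalArgumentException expected) {
            rejectsZero = true;
        }
        return rejectsZero
            && Shipping.costFor(1.0) == 5
            && Shipping.costFor(10.0) == 9
            && Shipping.costFor(10.01) == 20;
    }

    public static void main(String[] args) {
        System.out.println("black box checks passed: " + blackBoxChecks());
        System.out.println("white box checks passed: " + whiteBoxChecks());
    }
}
```

A gray box variant would sit in between, for example knowing from documentation that weight limits exist and probing around them without reading the implementation.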

How to Write a Unit Test

The following example shows a simple test used to verify that the comparison operation for a Euro class is implemented correctly.

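```java
// Assumes JUnit 4. With JUnit 5, import org.junit.jupiter.api.Test and the
// static methods of org.junit.jupiter.api.Assertions instead.
import static org.junit.Assert.assertEquals;
import static org.junit.Assert.assertTrue;

import org.junit.Test;

public class EuroTest {

    // Class under test, nested here to keep the example in one file.
    static class Euro {

        private final Double euroAmount;

        public Euro(double euroAmount) {
            this.euroAmount = Double.valueOf(euroAmount);
        }

        public Double getEuroAmount() {
            return this.euroAmount;
        }

        // Returns a negative value, zero or a positive value if this amount
        // is smaller than, equal to or larger than the other amount.
        public int compareTo(Euro euro) {
            if (this == euro) {
                return 0;
            }
            if (euro != null) {
                return this.euroAmount.compareTo(euro.getEuroAmount());
            }
            // Note: null is treated as equal here. An implementation of
            // Comparable would normally throw a NullPointerException instead.
            return 0;
        }
    }

    @Test
    public void compareEuroClass() {
        Euro oneEuro = new Euro(1);
        Euro twoEuro = new Euro(2);

        // Test that oneEuro is equal to itself
        assertEquals(0, oneEuro.compareTo(oneEuro));

        // Test that oneEuro is smaller than twoEuro
        assertTrue(oneEuro.compareTo(twoEuro) < 0);

        // Test that twoEuro is larger than oneEuro
        assertTrue(twoEuro.compareTo(oneEuro) > 0);
    }
}
```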

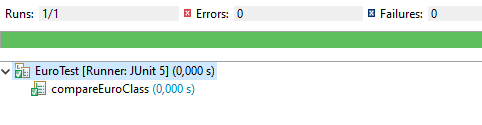

If the Euro object behaves as expected, JUnit confirms this with a green bar:

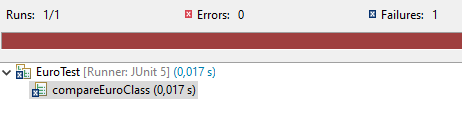

JUnit acknowledges behavior that deviates from the test with a red bar:

What Makes a Good Unit Test?

An effective unit test has the following properties:

- The individual tests must be independent of each other. The order of execution does not matter and has no influence on the overall test result. Also, if one test fails, the failure has no influence on the other unit tests.

- Each unit test focuses on exactly one property of the code.

- Unit tests must be completely automated. Usually, the tests are executed frequently to check for regression errors.

- The tests must be easy to understand to the developers and collaborators who are working on the same code.

- The quality of the unit tests must be as high as the quality of the production code.
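The independence property in particular is easy to show in code. In the sketch below, every check builds its own fixture, so the checks give the same result in any order. The names `ShoppingCart` and `IndependentChecks` are hypothetical, and plain Java is used instead of a testing framework to keep the example dependency-free.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical unit under test.
class ShoppingCart {
    private final List<Integer> priceCents = new ArrayList<>();

    void add(int cents) {
        priceCents.add(cents);
    }

    int totalCents() {
        int total = 0;
        for (int cents : priceCents) {
            total += cents;
        }
        return total;
    }
}

class IndependentChecks {
    // Each check creates a fresh cart, so no state leaks from one check to another.
    static boolean emptyCartHasZeroTotal() {
        ShoppingCart cart = new ShoppingCart();
        return cart.totalCents() == 0;
    }

    // Focuses on exactly one property: the total is the sum of the added prices.
    static boolean totalIsSumOfItems() {
        ShoppingCart cart = new ShoppingCart();
        cart.add(250);
        cart.add(199);
        return cart.totalCents() == 449;
    }

    public static void main(String[] args) {
        // Running the checks in either order yields the same results.
        boolean forward = emptyCartHasZeroTotal() && totalIsSumOfItems();
        boolean reversed = totalIsSumOfItems() && emptyCartHasZeroTotal();
        System.out.println("forward order ok: " + forward);
        System.out.println("reversed order ok: " + reversed);
    }
}
```

Had both checks shared a single static cart, the empty-cart check would pass or fail depending on whether it ran first, which is exactly the kind of order dependence to avoid.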

Unit Testing Best Practices

To further guarantee the success of a unit test, it helps to keep these best practices in mind:

- Embrace test-driven development: Write the test before writing the code it verifies. This saves time on having to rewrite code and tailor it to the needs of unit tests later on.

- Keep the test simple: Avoid adding unnecessary code, logic and other elements. This makes the test less confusing and speeds up the testing process.

- Organize testing elements: Keeping unit tests readable is also essential to making sure developers are on the same page. Follow a method like the arrange, act and assert pattern, so tests are easier to navigate.

- Ensure consistency: Unit tests must deliver the same results every time as long as the code under test is unchanged. Making sure a test doesn’t depend on external factors such as the current time, random values, the network or shared state keeps its results from fluctuating between runs.

- Incorporate diverse data: Unit tests must take into account the various scenarios a product or application may face. Be sure to include diverse data, so teams can test for invalid inputs, edge cases and other unique circumstances.

- Develop a naming system: A unit test title can cover things like the purpose, scenario and hypothesis of a test. Make the name specific, so team members can skim a library of tests and understand the gist of each test without having to read the code.
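Several of these practices can be combined in one short sketch: the arrange, act and assert pattern, descriptive names, and data that covers a typical value, a special value and an invalid input. The `Temperature` class and the naming scheme `unit_scenario_expectedResult` are assumptions made for illustration. The checks are plain Java methods so that the example runs without a testing framework.

```java
// Hypothetical unit under test.
class Temperature {
    static double celsiusToFahrenheit(double celsius) {
        if (Double.isNaN(celsius) || celsius < -273.15) {
            throw new IllegalArgumentException("temperature below absolute zero or not a number");
        }
        return celsius * 9.0 / 5.0 + 32.0;
    }
}

class BestPracticeChecks {
    // The name states the unit, the scenario and the expected outcome.
    static boolean celsiusToFahrenheit_freezingPoint_returns32() {
        // Arrange
        double celsius = 0.0;
        // Act
        double fahrenheit = Temperature.celsiusToFahrenheit(celsius);
        // Assert
        return fahrenheit == 32.0;
    }

    // Special value: the point where both scales show the same number.
    static boolean celsiusToFahrenheit_minus40_returnsMinus40() {
        // Arrange
        double celsius = -40.0;
        // Act
        double fahrenheit = Temperature.celsiusToFahrenheit(celsius);
        // Assert
        return fahrenheit == -40.0;
    }

    // Invalid input: values below absolute zero must be rejected.
    static boolean celsiusToFahrenheit_belowAbsoluteZero_throws() {
        // Arrange
        double celsius = -300.0;
        try {
            // Act
            Temperature.celsiusToFahrenheit(celsius);
            return false;
        } catch (IllegalArgumentException expected) {
            // Assert: the invalid input was rejected
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println(celsiusToFahrenheit_freezingPoint_returns32());
        System.out.println(celsiusToFahrenheit_minus40_returnsMinus40());
        System.out.println(celsiusToFahrenheit_belowAbsoluteZero_throws());
    }
}
```

With names like these, a failing check already tells a reader which scenario broke before they open the code.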

Frequently Asked Questions

What is unit testing?

Unit testing is the process of testing the smallest parts of an application, such as functions or methods, to ensure they work correctly in isolation.

Why is unit testing important?

It helps detect errors early, supports faster development, prevents regression issues and builds confidence in code quality.

How is unit testing different from integration testing?

Unit testing checks individual components in isolation, while integration testing verifies that combined units function together properly.

What tools are used for unit testing?

Common tools include JUnit (Java), NUnit (.NET), CppUnit (C++), PHPUnit (PHP) and pytest or unittest for Python.