Last month, Anthropic quietly admitted they had to rewrite their technical interview questions. The reason? Candidates were using Claude — Anthropic’s own AI tool — to “cheat” on the coding tests.

The industry reaction was predictable. Hiring managers panicked. They called for stricter proctoring, disabled browser tabs and a return to whiteboard interviews administered in Faraday cages. They treated the news as an integrity crisis.

They’re wrong. This is not an integrity crisis. It’s a measurement crisis.

I speak from experience. Over the last 15 years, I have reviewed thousands of resumes and sat in hundreds of interviews. For most of my career, the syntax interview, which consists of writing a sorting algorithm from scratch or reversing a linked list on a whiteboard, was the gold standard for software roles. It was a useful proxy for competence because code generation was scarce. When I saw a candidate write syntactically perfect code under pressure, I knew they possessed deep memory and logic.

But that era is over. Generative AI has driven the marginal cost of syntax to near zero. Code generation is now a commodity. If your interview tests for commodity skills, you are optimizing for commodity talent.

To build teams that can actually make use of AI, we need to stop testing for generation and start testing for verification.

What Is an Audit Interview? How Is It Different From Coding Interviews?

An audit interview is a technical hiring method that replaces traditional blank-slate coding tests with a verification-based assessment.

Instead of writing syntax from scratch, a candidate is given functioning but flawed AI-generated code and tasked with identifying hidden risks, such as memory leaks, security vulnerabilities or scalability issues. The goal is to evaluate a candidate’s judgment, architectural thinking and ability to interrogate AI output rather than their ability to memorize code patterns.

The Economics of Abundance

To understand why the interview must change, we have to look at the economics of the role. In any labor market, you pay for scarcity.

In 2015, the ability to write a React component from memory was scarce. The documentation was fragmented, the tooling was complex and the syntax was unforgiving. We paid developers a premium to hold that complexity in their heads.

In 2026, the ability to write a React component is abundant. An LLM can generate ten variations of it in seconds. When a skill transitions from scarce to abundant, its market value collapses. We saw this with arithmetic in the 1980s. Before the spreadsheet, being able to mentally calculate a ledger was a valuable skill for an accountant. After Excel, mental math became a parlor trick. The value shifted to financial modeling and risk analysis.

We are at the “Excel moment” for software engineering. The value is no longer in the typing. It’s in the thinking. Yet our hiring loops are still testing for the equivalent of mental math.

When we ban AI from the interview process, we’re artificially enforcing a constraint that doesn’t exist in the real world. We’re testing how well a candidate performs in a vacuum, not how they will perform in a modern IDE. This artificiality creates a dangerous misalignment between the test and the job.

The Collapse of the Proxy

The technical interview was never really about the code. It was a proxy for gauging a candidate’s problem-solving skills. We assumed that if you could navigate the syntax of a complex function, you understood the logic behind it.

AI broke that link. Today, a junior developer with an LLM can generate senior-level syntax in seconds without understanding the underlying system design. That degrades the signal-to-noise ratio of the hiring process and creates two dangerous false signals:

The False Positive

The candidate who memorized LeetCode patterns passes the test but cannot reason through a complex, ambiguous system failure. They are syntax-rich but judgment-poor.

The False Negative

The senior architect who relies on documentation and tooling to handle syntax so they can focus on system constraints fails the test because they forgot the specific parameter order of a library function.

In a feature factory, we hire for lines of code produced. In a capital-efficient environment, however, we must hire for judgment.

The Subprime Code Crisis

There is a secondary risk to hiring for generation: the explosion of “subprime code.”

Because AI makes generating code free, we’re seeing a massive inflation in the volume of code being pushed to repositories. But AI-generated code often carries hidden debt. It might choose an inefficient library, introduce a subtle security vulnerability or set up a dependency structure that becomes a maintenance nightmare six months down the road.

If you hire engineers who are great at prompting AI but terrible at auditing it, you’re building a codebase that is technically insolvent. You’re accumulating maintenance liabilities faster than you’re creating asset value.

This is why the skill set for a senior engineer is shifting. Reading, debugging and verifying code is harder than writing it. When an AI tool generates a 500-line module, the human in the loop must act as the auditor. They need to spot the expensive N+1 query that the AI quietly introduced.

This kind of work requires a completely different cognitive approach. It requires architectural thinking, not syntactical recall.
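To make the N+1 query concrete, here is a minimal sketch in Python using the standard-library `sqlite3` module. The `authors` and `posts` tables, the sample rows and both function names are hypothetical, invented purely for illustration. The first function is the shape an auditor should flag: it runs one query for the list, then one more per row. The second returns the same data in a single query.

```python
import sqlite3

# Hypothetical schema and data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT);
    INSERT INTO authors VALUES (1, 'Ada'), (2, 'Lin');
    INSERT INTO posts VALUES (1, 1, 'A1'), (2, 1, 'A2'), (3, 2, 'L1');
""")


def titles_n_plus_one(conn):
    """One query for the authors, then one more query per author."""
    result = {}
    authors = conn.execute("SELECT id, name FROM authors ORDER BY id").fetchall()
    queries = 1
    for author_id, name in authors:
        rows = conn.execute(
            "SELECT title FROM posts WHERE author_id = ? ORDER BY id",
            (author_id,),
        ).fetchall()
        queries += 1  # grows linearly with the number of authors
        result[name] = [title for (title,) in rows]
    return result, queries


def titles_single_join(conn):
    """Same result from a single LEFT JOIN, however many authors exist."""
    result = {}
    rows = conn.execute(
        "SELECT a.name, p.title FROM authors a "
        "LEFT JOIN posts p ON p.author_id = a.id ORDER BY a.id, p.id"
    ).fetchall()
    for name, title in rows:
        titles = result.setdefault(name, [])
        if title is not None:  # authors without posts keep an empty list
            titles.append(title)
    return result, 1


slow_result, slow_queries = titles_n_plus_one(conn)
fast_result, fast_queries = titles_single_join(conn)
print(slow_queries, fast_queries)
```

With two authors the difference is three queries versus one, which no test suite will notice. With 50,000 authors it is 50,001 round trips to the database, which is the kind of cost that only shows up in production.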

Enter the Audit Interview

So how do we fix the hiring loop? We don’t ban the tools. We embrace them.

I recommend replacing the whiteboard syntax session with what I call the audit interview.

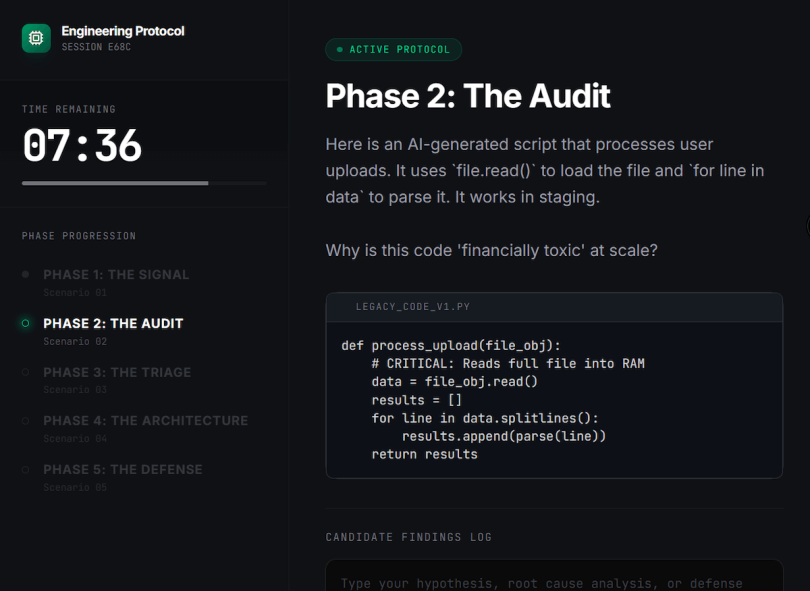

In an audit interview, you don’t ask the candidate to write code from a blank slate. Instead, you hand them a block of functioning but flawed code generated by an AI tool. You tell them: “This code works, but it will break in production at scale. Tell me why.”

For example, provide a Python script that processes a data set. The logic is correct, but it loads the entire file into memory rather than streaming it.

- The junior engineer will say, “It looks good because it runs.”

- The senior engineer will say, “Memory use here grows O(n) with file size, so this is an out-of-memory crash waiting to happen. If the file exceeds 4GB, the container will crash.”

The Verification Audit. In this live simulation, the candidate isn’t asked to write code; they’re handed AI-generated code that contains a hidden memory bomb (loading a full file into RAM). The test is whether they catch the liability that the AI created.
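As a rough illustration of what such a script might look like, here is a minimal Python sketch. It is not the exact code from any real interview. The CSV layout, the `amount` column and both function names are assumptions made up for this example. The first function is the "works on my laptop" version a candidate would be handed; the second is what a good audit should lead to.

```python
import csv
import io


def total_amount_eager(f):
    """Correct, but materializes every row in a list before summing."""
    rows = list(csv.DictReader(f))  # whole file held in memory at once
    return sum(float(row["amount"]) for row in rows)


def total_amount_streaming(f):
    """Same result, but only one row is held in memory at a time."""
    return sum(float(row["amount"]) for row in csv.DictReader(f))


# Tiny in-memory sample so the sketch runs without a real file.
sample = "id,amount\n1,10.5\n2,4.5\n3,5.0\n"
eager_total = total_amount_eager(io.StringIO(sample))
streaming_total = total_amount_streaming(io.StringIO(sample))
print(eager_total, streaming_total)
```

Both functions return the same total on the sample, which is exactly why the flaw is easy to miss: the difference only appears when the input is larger than available memory.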

This changes the dynamic instantly.

- It tests code review skills. Can they spot the edge case the AI missed?

- It tests system thinking. Do they ask about the scale this code needs to support?

- It tests economic judgment. Do they notice that the AI chose an expensive third-party API when a local library would have been free?

You can even let them use ChatGPT or Claude during the interview. Watch how they prompt it. Do they treat the AI as an oracle or an intern? A junior engineer accepts the AI’s output as truth. A senior engineer interrogates it, challenges its assumptions and forces it to refine its output based on constraints.

Mentorship in the Age of Auto-Complete

A common objection to this shift is the so-called junior gap. If juniors don’t write code from scratch, how will they ever develop the intuition to audit it?

The answer isn’t to force them to write syntax on a whiteboard. The answer is to invert the apprenticeship.

In the past, a senior engineer reviewed the junior’s code. In the AI era, the junior should be reviewing the AI’s code, with the senior engineer reviewing the review. The skill we are cultivating is the ability to spot patterns of failure, not the ability to type characters.

Hire Architects, Not Bricklayers

The 10x Engineer of the last decade was the one who could type the fastest. The 10x Engineer of the next decade is the one who can direct the fleet of AI agents to build the right thing.

We’re moving from an era of construction to an era of curation. The companies that cling to the syntax interview will fill their seats with people who are good at memorizing puzzles. The companies that shift to the verification interview will fill their seats with architects who understand risk, cost and trade-offs.

Anthropic didn’t prove that candidates are cheaters. They proved that the test is broken. Stop testing for the robot’s job. Start testing for the human’s.