People want to use neural networks everywhere, but are they always the right choice? We’ll take a look at some of the disadvantages of using them.

What Is a Neural Network?

A neural network is a machine learning model loosely inspired by the way the human brain processes information. Each neural network is made up of layers of nodes, which pass data to one another. By training on data, a neural network learns from its mistakes and improves its performance over time. As a result, neural networks are well suited to complex data-related tasks.
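To make "layers of nodes passing data between each other" concrete, here is a minimal sketch of a forward pass in plain Python. The weights, the layer sizes and the sigmoid activation are all assumptions chosen for illustration, not values from a trained model.

```python
import math

def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def dense_layer(inputs, weights, biases):
    """One layer: each node takes a weighted sum of all inputs, adds a bias, applies sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]

def forward(inputs, layers):
    """Pass the data through every layer in order; each layer's output feeds the next."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations

# Hypothetical, hand-picked weights: 2 inputs -> 3 hidden nodes -> 1 output node.
layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.5, 0.2]], [0.05]),
]
output = forward([1.0, 2.0], layers)
print(output)  # a single value between 0 and 1
```

Training, which this sketch leaves out, is the process of adjusting those weights and biases so the output moves closer to the desired answer.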

Disadvantages of Neural Networks

Neural networks have spurred innovation within the field of artificial intelligence, but there are several reasons to be cautious about applying this learning method.

1. Neural Networks Are a ‘Black Box’

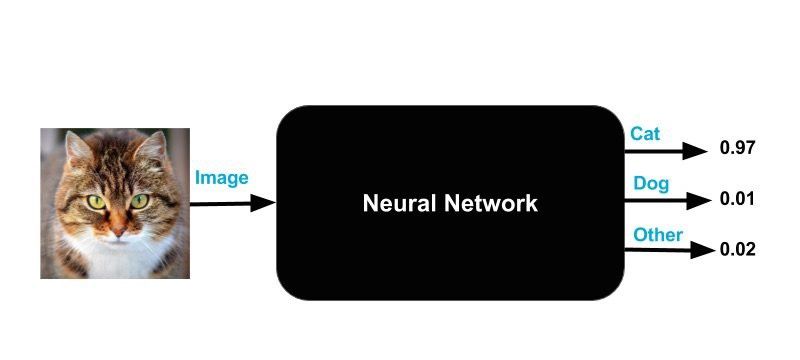

Arguably, the best-known disadvantage of neural networks is their “black box” nature. Simply put, you don’t know how or why your neural network came up with a certain output. For example, when you put an image of a cat into a neural network and it predicts it to be a car, it is very hard to understand what caused it to arrive at this prediction. When you have features that are human-interpretable, it is much easier to understand the cause of the mistake. By comparison, algorithms like decision trees are very interpretable. This is important because in some domains, interpretability is critical.

This is why a lot of banks don’t use neural networks to predict whether a person is creditworthy — they need to explain to their customers why they didn’t get the loan, otherwise the person may feel unfairly treated. The same holds true for sites like Quora. If a machine learning algorithm decided to delete a user’s account, the user would be owed an explanation as to why. I doubt they’ll be satisfied with “that’s what the computer said.”

Important business decisions are another example. Can you imagine the CEO of a big company making a decision about millions of dollars without understanding why it should be done, just because the “computer” says so?
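To see what interpretability buys you, consider a tiny hand-written decision tree for the credit example. This is only a sketch: the function name, the features and every threshold are made up for illustration and are not how any real bank scores applicants.

```python
def credit_decision(income, debt_ratio, missed_payments):
    """Return (approved, reason). Every outcome maps to exactly one readable rule."""
    # All thresholds below are hypothetical.
    if missed_payments > 2:
        return False, "more than 2 missed payments in the last year"
    if debt_ratio > 0.4:
        return False, "debt-to-income ratio above 40%"
    if income < 30_000:
        return False, "annual income below 30,000"
    return True, "all checks passed"

approved, reason = credit_decision(income=52_000, debt_ratio=0.55, missed_payments=0)
print(approved, reason)  # False debt-to-income ratio above 40%
```

The rejected applicant can be told exactly which rule fired. A neural network making the same decision would hand back a score produced by thousands of weights, with no single rule to point to.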

2. Neural Networks May Take a Long Time to Develop

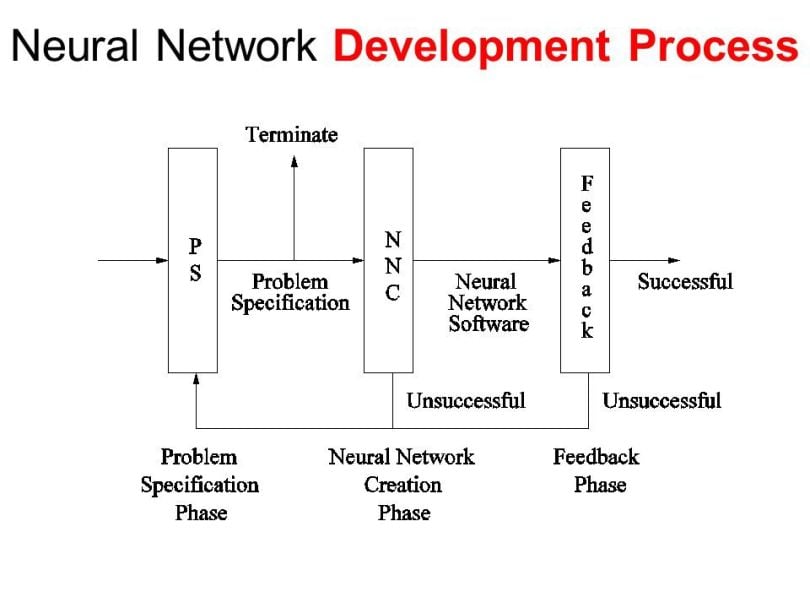

Although there are libraries like Keras that make the development of neural networks fairly simple, sometimes you need more control over the details of the algorithm, like when you’re trying to solve a difficult problem with machine learning that no one has ever done before.

In that case, you might work with TensorFlow directly, which gives you more low-level control, but it is also more complicated and development takes much longer (depending on what you want to build). Then a practical question arises for any company: Is it really worth it for expensive engineers to spend weeks developing something that may be solved much faster with a simpler algorithm?

3. Neural Networks Require Lots of Data

Neural networks usually require much more data than traditional machine learning algorithms, as in at least thousands if not millions of labeled samples. This isn’t an easy problem to deal with and many machine learning problems can be solved well with less data if you use other algorithms.

Although there are some cases where neural networks do well with little data, most of the time they don’t. In this case, a simple algorithm like naive Bayes, which deals much better with little data, would be the appropriate choice.
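As a rough illustration of why naive Bayes copes with small data sets, here is a minimal Gaussian naive Bayes written in plain Python. It only has to estimate a prior, a mean and a variance per feature and class, so eight samples are enough to fit it. The data set is made up, and the sketch does not prove that naive Bayes beats a neural network in general.

```python
import math
from collections import defaultdict

def fit_gaussian_nb(X, y):
    """Estimate per-class prior, feature means, and feature variances."""
    by_class = defaultdict(list)
    for row, label in zip(X, y):
        by_class[label].append(row)
    model = {}
    for label, rows in by_class.items():
        n = len(rows)
        means = [sum(col) / n for col in zip(*rows)]
        # The small constant keeps the variance positive when a class has few samples.
        variances = [
            sum((v - m) ** 2 for v in col) / n + 1e-6
            for col, m in zip(zip(*rows), means)
        ]
        model[label] = (n / len(X), means, variances)
    return model

def predict(model, row):
    """Pick the class with the highest log posterior under independent Gaussians."""
    def log_posterior(prior, means, variances):
        score = math.log(prior)
        for v, m, var in zip(row, means, variances):
            score += -0.5 * math.log(2 * math.pi * var) - (v - m) ** 2 / (2 * var)
        return score
    return max(model, key=lambda label: log_posterior(*model[label]))

# Eight made-up samples: [height_cm, weight_kg] -> label.
X = [[150, 50], [155, 54], [160, 58], [152, 49],
     [180, 82], [185, 90], [178, 79], [190, 95]]
y = ["small", "small", "small", "small", "large", "large", "large", "large"]

model = fit_gaussian_nb(X, y)
print(predict(model, [158, 55]), predict(model, [183, 85]))  # small large
```

The whole model here is ten numbers (a prior, two means and two variances per class), which is why so few samples can pin it down.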

4. Neural Networks Are Computationally Expensive

Usually, neural networks are also more computationally expensive than traditional algorithms. State-of-the-art deep learning algorithms, which realize successful training of really deep neural networks, can take several weeks to train completely from scratch. By contrast, most traditional machine learning algorithms take much less time to train, ranging from a few minutes to a few hours or days.

The amount of computational power needed for a neural network depends heavily on the size of your data, but also on the depth and complexity of your network. For example, a neural network with one layer and 50 neurons will be much faster than a random forest with 1,000 trees. By comparison, a neural network with 50 layers will be much slower than a random forest with only 10 trees.
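A back-of-the-envelope count shows how that comparison can flip. The sketch below is deliberately crude: it counts multiply-adds for one forward pass of a fully connected network and, for the forest, an upper bound of one comparison per tree level. The 20 input features, 50 neurons per layer and maximum tree depth of 20 are all assumptions, and the count says nothing about training time.

```python
def mlp_multiply_adds(layer_sizes):
    """Multiply-add operations for one forward pass of a fully connected network."""
    return sum(n_in * n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

def forest_comparisons(n_trees, max_depth):
    """Upper bound on threshold comparisons for one prediction: one per level per tree."""
    return n_trees * max_depth

n_features = 20  # assumed input size

# One hidden layer with 50 neurons vs. 50 hidden layers with 50 neurons each.
small_net = mlp_multiply_adds([n_features, 50, 1])
deep_net = mlp_multiply_adds([n_features] + [50] * 50 + [1])

# Random forests with an assumed maximum depth of 20.
big_forest = forest_comparisons(n_trees=1000, max_depth=20)
small_forest = forest_comparisons(n_trees=10, max_depth=20)

print(small_net, big_forest)    # small network vs. large forest
print(deep_net, small_forest)   # deep network vs. small forest
```

With these assumed sizes, the one-layer network needs about 1,000 multiply-adds per prediction against up to 20,000 comparisons for the 1,000-tree forest, while the 50-layer network needs over 120,000 against at most 200 for the 10-tree forest.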

Advantages of Neural Networks

Despite their downsides, neural networks do come with some benefits that make them a more attractive option compared to traditional machine learning algorithms.

1. Neural Networks Can Handle Unorganized Data

Neural networks can process large volumes of raw data, enabling them to tackle advanced data challenges. In fact, neural networks get better as they’re fed more data. By comparison, traditional machine learning algorithms reach a level where more data doesn’t improve their performance.

2. Neural Networks Can Improve Accuracy

Neural networks learn iteratively: each pass over the training data adjusts the network’s weights to reduce its error, so performance gradually improves with each iteration. This process lets a model build on what it has already learned and raise its accuracy over the course of training.
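The idea of improving after each iteration can be sketched with the simplest possible stand-in for a network: a single linear neuron trained by gradient descent. The data, the learning rate and the number of epochs are assumptions picked so the example converges; real networks have many more weights but follow the same loop.

```python
def train_linear_neuron(xs, ys, learning_rate=0.05, epochs=200):
    """Fit y ~ w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    losses = []
    n = len(xs)
    for _ in range(epochs):
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        losses.append(sum(e * e for e in errors) / n)  # loss before this update
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        # Step against the gradient to reduce the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b, losses

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # generated from y = 2x + 1

w, b, losses = train_linear_neuron(xs, ys)
print(round(w, 2), round(b, 2))
print(losses[0], losses[-1])  # the loss shrinks as training proceeds
```

Each epoch nudges `w` and `b` toward values that shrink the error, which is what raising accuracy over time means in practice.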

3. Neural Networks Can Increase Flexibility

Neural networks are able to adapt to different problems and environments, unlike more rigid machine learning algorithms. This makes it possible to apply neural networks to a wide range of areas, including natural language processing and image recognition.

4. Neural Networks Can Lead to Faster Workflows

Neural networks are capable of performing multiple actions simultaneously, speeding up workflows for machines and humans alike. And with computational power increasing exponentially over the years, neural networks can process even more data than before.

Neural Networks vs. Traditional Algorithms

Should you use neural networks or traditional machine learning algorithms? It’s a tough question to answer because it depends heavily on the problem you are trying to solve. Consider the “no free lunch theorem,” which roughly states there is no “perfect” machine learning algorithm that will perform well on every problem. For any given problem, one method may be well suited and achieve good results, while another fails badly.

Personally, I see this as one of the most interesting aspects of machine learning. It’s the reason why anyone working in the field needs to be proficient with several algorithms and why getting our hands dirty through practice is the only way to become a good machine learning engineer or data scientist. That said, helpful guidelines on when to use which type of algorithm never hurt.

The main advantage of neural networks lies in their ability to outperform other machine learning algorithms on many tasks, provided they have enough data and compute. But deciding whether to use neural networks or not depends mostly on the problem at hand. In cancer detection, for example, high performance is crucial because the better the performance, the more people can be treated. But there are also machine learning problems where a traditional algorithm delivers a more than satisfying result.

When to Use Neural Networks

If you’re working with complex data like image data, then neural networks are better suited for processing this data than traditional machine learning algorithms. Large volumes of raw data also call for neural networks, which perform at a higher level when they have access to more data.

And since neural networks require more data to be effective, you may find them useful only if you’re able to take your time solving an advanced data problem. If you’re dealing with a simple data set or need more immediate analysis, neural networks may take too long to train as opposed to more traditional machine learning algorithms.

At the end of the day, neural networks are great for some problems and not so great for others. In my opinion, neural networks are a little over-hyped at the moment and the expectations exceed what can really be done with them, but that doesn’t mean they aren’t useful. We’re living in a machine learning renaissance and the technology is becoming more and more democratized, which allows more people to use it to build useful products. There are a lot of problems out there that can be solved with machine learning, and I’m sure we’ll see progress in the next few years.

One of the major problems is that only a few people understand what can really be done with machine learning and know how to build successful data science teams that bring real value to a company. On one hand, we have PhD-level engineers who are geniuses in the theory behind machine learning, but lack an understanding of the business side; on the other, we have CEOs and people in management positions who have no idea what can really be done with neural networks, but think they will solve all the world’s problems in a short time. We need more people who bridge this gap, which will result in more products that are useful for our society.

Frequently Asked Questions

What is a neural network?

A neural network is a method of learning that enables computers to process data in a way that mimics the human brain. Neural networks consist of collections of nodes that pass data between each other, giving machines the ability to learn from past experiences and improve their performance over time.

Why are neural networks considered inefficient?

Neural networks require much larger volumes of data than traditional machine learning algorithms to learn and become proficient in a certain task. This creates a longer training process, which may not be worth it depending on the type of problem or situation.