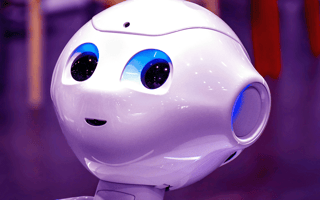

Advances in artificial intelligence and computer vision have given us social robots, many of which can read our facial expressions and tell whether we’re frustrated, worried, happy or excited, even though they lack the empathy and true emotional understanding that sentience would require.

What Is a Social Robot?

A social robot is a robot capable of interacting with humans and other robots. Social robots are developed using artificial intelligence and are often equipped with sensors, cameras, microphones and other technology so they can respond to touch, sounds and visual cues much like humans would.

Social robots are artificial intelligence platforms paired with sensors, cameras, microphones and other technology, like computer vision, so they can better interact and engage with humans or other robots. They come in many different shapes and sizes, from human-like faces on static pedestals to furry, tail-wagging puppies.

Most people picture a social robot as one that can communicate and understand intent and mood, much as humans do, according to Alexander Kernbaum, interim director of SRI International’s Robotics Laboratory. But there are many ways robots can do that, whether by deciphering facial expressions and responding with a smile, or by tracking humans with their eyes to show they’re paying attention.

How Are Social Robots Used?

Despite their lack of consciousness, social robots provide companionship and emotional and learning support to children and adults who play, talk and even snuggle with them like they would a pet. They also work together in warehouses and factories to transport goods, and are used as research and development platforms, allowing researchers to study how humans interact with robots and make even further advances in the field.

Social robots are most often found working as companions and support tools for childhood development, specifically autism therapy and social-emotional learning. Social robot pets can even be an effective form of therapy for people with dementia.

Social robots also work as concierges in hotels and other settings like malls, where they provide customer service.

And depending on how loosely one defines a robot, social robots have become even more personal. The smartphones we carry in our pockets use built-in social artificial intelligence tools like Siri to help us avoid traffic jams, compose texts and add events and meetings to our calendars.

Social Robot Examples

Whether in the workplace, on our streets or working with loved ones, social robots continue to evolve. Here are a few examples of social robots being used today.

Furhat by Furhat Robotics

Furhat is a customizable social robot that’s used for prototype and application development. Researchers and developers are able to update Furhat’s code to test out verbal and non-verbal response modes. It can also be outfitted with different masks to represent a variety of human likenesses across age, gender and race. Furhat can even depict dogs and anime characters. More than 200 voices are also available.

According to Furhat Robotics’ website, their social robot is also capable of communicating through facial expressions, head movements and eye gaze, and can even raise its eyebrows for added emphasis during conversation. Equipped with computer vision for face tracking, Furhat can analyze facial expressions and interact with as many as 10 people at the same time.

Jennie by Tombot

Jennie is a robotic dog that’s used to provide emotional support to people living with health conditions like dementia, helping to minimize the effects of depression, anxiety and loneliness. Equipped with touch sensors and voice command software, Jennie is a social robot that’s modeled after a golden Labrador retriever puppy. It can wag its tail and make real puppy sounds.

Misty by Misty Robotics

Misty is a programmable mobile social robot developed by Misty Robotics (acquired by Furhat in 2022) that has been used to perform temperature screenings in workplaces and healthcare settings, and is now taking on a greater role in education. Using its eyes and sounds, Misty can express a range of emotions, from joy to sadness. The robot’s head, neck and arms also move, allowing it to communicate curiosity or excitement. It responds to touch and is able to recognize and remember people and detect obstacles.

Moxie by Embodied

Named by Time as one of the best inventions of 2020, Moxie is a social robot that supports social-emotional learning in children by engaging them in play-based activities. Developed by Embodied, Moxie is designed for children roughly 5 to 10 years old and is able to respond to conversation, eye contact and facial expressions. It’s also capable of remembering people, places and things.

Orbit

Orbit is a small social robot developed by Ben Powell, a graduate of Loughborough University in England, to help children with autism develop social skills through storytelling, physical touch and engagement, as well as visual communication like facial expressions. According to a Loughborough University press release, Powell, who has been diagnosed with mild, high-functioning autism, created Orbit so children could “see emotions in context and develop social skills independently.”

“By giving Orbit a personality, children can build a connection with the robot and then empathize with it,” Powell said in the release. “This will teach the user social appropriateness and help them recognize how their actions may make the robot feel — i.e., if they press Orbit too hard or hit it, the robot will look sad or scared.”

QTrobot by LuxAI

QTrobot is another social robot used by educators and families looking to support children with autism, at school and at home. According to LuxAI’s website, QTrobot is able to boost attention, engagement and concentration while reducing anxiety and overstimulation in a learning environment. LuxAI has also developed a research and development platform, QTrobot V2, which institutions and researchers can purchase for the study of social robotics and human-robot interaction. It is equipped with 3D cameras, high-quality microphones, text-to-speech and skeleton tracking, along with emotion and gesture recognition.

Abi by Andromeda Robotics

Designed to be a robotic companion, Abi uses cameras and a humanoid frame to navigate her surroundings. A GPT-3-powered dialogue engine and machine learning give Abi the ability to remember faces, convey emotions and sustain conversations. In September 2023, Abi began serving in aged care facilities in several Australian states as part of a trial conducted by Andromeda Robotics in partnership with Allity.

The Future of Social Robots

Design will be one key to improving human-robot interaction. Human-like indicators we’re already familiar with can not only increase safety, but also help manage human expectations of robots.

But managing expectations can be a complex design problem, according to Brad Porter, founder and CEO of Collaborative Robotics, a company developing a collaborative robot, or cobot, for use in industrial settings, where Porter believes human-robot interactivity and social awareness play an important role. A social robot geared toward children, or used in other social support settings, has to behave and interact differently than one in a workplace, where expectations are very different.

“If you make your robot look like a human-like cartoon character, then you kind of expect that it has child-like behaviors and interaction ability,” Porter said. “That expectation could be quite hard to achieve, because children are unique and funny and creative, and trying to endow all that into a robot can be very hard.”

Besides design, artificial intelligence will also be critical to the future of social robots. Researchers at Brown University’s Humans to Robots Laboratory have been working on an AI system that enables navigation robots to understand commands in English. This would save humans from having to deliver commands in code and simplify human-robot communication in the workplace and possibly other settings.

Kernbaum also believes it’s important to consider what artificial intelligence — and the robotic platforms we ultimately house it in — can do better than humans given their tremendous access to data. “I’m not worried about them taking our jobs,” Kernbaum said. “But there are going to be things that they’re better at and maybe we want to focus on those.”

AI-powered social robots are showing promise as personalized learning and support tools, for example in helping children learn a second language and in building communication skills among kids with autism. But as in other areas of robotics, it’s not that social robots always perform a specific task better than a human counterpart; it’s that they perform it consistently.

It’s also not just how social robots will evolve, but how humans evolve alongside social robots that will likely determine their future. While humans can connect with social robots through the robots’ design and intelligence, it remains to be seen whether humans can integrate robots into their daily lives and use them to facilitate relationships with other humans.

Frequently Asked Questions

What is a social robot used for?

Social robots provide social and emotional support to children, including those with autism, and to people living with dementia. These robots also perform workplace tasks like moving goods and serving in customer-facing roles.

What is the most famous social robot?

Sophia is perhaps the most famous social robot, appearing in music videos, on the cover of Cosmopolitan and even multiple times on The Tonight Show Starring Jimmy Fallon.

Who was the first social robot?

Kismet is widely regarded as one of the first social robots. Designed in the late 1990s by Cynthia Breazeal, then a graduate student at MIT’s Artificial Intelligence Laboratory, Kismet displayed facial expressions to communicate with humans and became a landmark in the field of social robotics.

Is Siri a social robot?

While Siri possesses the social intelligence to engage in basic conversations with humans, it is classified as a virtual assistant rather than a social robot.