Every organization runs on data: customer records, product catalogs, financial accounts, supplier lists. But when the same customer appears five different ways across five different systems or when two teams are working from conflicting versions of the truth, the cracks start to show. Master data management (MDM) is how companies fix that.

The biggest misconception about MDM is that it’s a data problem. In reality, it’s an ownership problem disguised as a data problem. This matters most for organizations operating across multiple systems, products and data domains where consistency is no longer optional.

What Is Master Data?

Before defining MDM, it helps to understand what “master data” actually means.

Master data is the core, non-transactional data that represents the key business entities an organization operates around. Think of it as the nouns of your business:

- Customers: Names, contact details, account identifiers.

- Products: SKUs, descriptions, pricing tiers.

- Employees: HR records, roles, org hierarchy.

- Locations: Offices, warehouses, delivery addresses.

- Suppliers and Partners: Vendor IDs, contracts, payment terms.

This data is distinct from transactional data (e.g., a sales order, a payment event, a login session), which records what happened. Master data describes who and what is involved. Both matter, but master data is the shared reference that makes transactional data meaningful and consistent across systems.
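To make the distinction concrete, here is a minimal sketch in Python. The class names, fields and sample values are hypothetical, chosen only for illustration: a `Customer` is master data, and a `SalesOrder` is transactional data that points back at it through a shared ID.

```python
from dataclasses import dataclass
from datetime import datetime
from decimal import Decimal


@dataclass(frozen=True)
class Customer:
    """Master data: describes who is involved."""
    customer_id: str
    name: str
    email: str


@dataclass(frozen=True)
class SalesOrder:
    """Transactional data: records what happened and references master data."""
    order_id: str
    customer_id: str  # reference to the shared Customer master record
    amount: Decimal
    placed_at: datetime


# Illustrative sample data (not from any real system).
customers = {"C-001": Customer("C-001", "Ada Lovelace", "ada@example.com")}
orders = [
    SalesOrder("O-1", "C-001", Decimal("19.99"), datetime(2024, 1, 5, 9, 30)),
    SalesOrder("O-2", "C-001", Decimal("5.00"), datetime(2024, 2, 1, 14, 0)),
]


def total_spend(customer_id: str) -> Decimal:
    """Sum order amounts for one customer; this only works if the IDs agree."""
    return sum(
        (o.amount for o in orders if o.customer_id == customer_id),
        Decimal("0"),
    )
```

The total is only trustworthy because every order uses the same customer ID, which is exactly the consistency MDM is meant to provide.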

What Is Master Data Management?

Master data management (MDM) is the discipline, as well as the set of tools and processes, for ensuring that an organization’s master data is accurate, consistent, deduplicated and authoritative across all the systems that use it.

In practice, MDM means establishing a single, trusted version of each core business entity and making that version the canonical reference point for every application, report and workflow in the organization. When a customer changes their address, that update flows everywhere. When two records turn out to be the same person, MDM resolves the duplicate. When a new system is onboarded, it pulls from the same governed source rather than reinventing its own representation.

MDM sits at the intersection of governance, quality and integration. It is not just a technology purchase; it requires organizational commitment to who owns which data, how disputes are resolved and how quality is enforced over time.

How Does Master Data Management Work?

At its core, MDM works through four interconnected processes:

1. Data Consolidation and Ingestion

MDM starts by pulling master data from all the source systems where it currently lives: a CRM, an ERP, a billing platform, a legacy database. This ingestion step provides the full picture of what data exists and where, often for the first time.
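As a rough sketch of what ingestion can look like, the Python below maps rows from two sources into one common staging shape. The source field names (`FullName`, `acct`, and so on) and the staging schema are assumptions made up for this example, not the layout of any particular CRM or billing product.

```python
from typing import Callable

# Hypothetical raw rows as they might arrive from two source systems.
crm_rows = [{"Id": "123", "FullName": "Ada Lovelace", "Email": "Ada@Example.com"}]
billing_rows = [{"acct": "B-9", "name": "A. Lovelace", "contact_email": "ada@example.com"}]


def from_crm(row: dict) -> dict:
    """Map a CRM row into the common staging shape."""
    return {"source": "crm", "source_id": row["Id"],
            "name": row["FullName"], "email": row["Email"].lower()}


def from_billing(row: dict) -> dict:
    """Map a billing row into the common staging shape."""
    return {"source": "billing", "source_id": row["acct"],
            "name": row["name"], "email": row["contact_email"].lower()}


def ingest(feeds: list[tuple[list[dict], Callable[[dict], dict]]]) -> list[dict]:
    """Apply each source's mapper and return one combined staging list."""
    staged = []
    for rows, mapper in feeds:
        staged.extend(mapper(row) for row in rows)
    return staged


staged = ingest([(crm_rows, from_crm), (billing_rows, from_billing)])
```

Keeping `source` and `source_id` on every staged row preserves the link back to the originating system, which later steps need for matching and lineage.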

2. Matching and Deduplication

Once data from multiple sources is collected, the MDM system runs matching algorithms to identify records that represent the same real-world entity. It uses deterministic rules (exact field matches), probabilistic scoring (fuzzy matching on name + address + phone) and, increasingly, machine learning models to make these link decisions at scale.
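The sketch below illustrates the first two approaches in Python. It is a simplification: the fuzzy part is a weighted string-similarity score built on the standard library's `difflib.SequenceMatcher`, standing in for a true probabilistic model, and the field weights are assumptions picked for the example.

```python
from difflib import SequenceMatcher

# Assumed field weights for the fuzzy score; a real program would tune these.
WEIGHTS = {"name": 0.5, "address": 0.3, "phone": 0.2}


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace before comparing."""
    return " ".join(text.lower().split())


def similarity(a: str, b: str) -> float:
    """String similarity in [0, 1]; a missing value never counts as a match."""
    a, b = normalize(a), normalize(b)
    if not a or not b:
        return 0.0
    return SequenceMatcher(None, a, b).ratio()


def match_score(rec_a: dict, rec_b: dict) -> float:
    """Score how likely two records describe the same entity."""
    # Deterministic rule: an identical, non-empty email is treated as a match.
    email_a = normalize(rec_a.get("email") or "")
    email_b = normalize(rec_b.get("email") or "")
    if email_a and email_a == email_b:
        return 1.0
    # Fuzzy rule: weighted similarity across name, address and phone.
    return sum(
        weight * similarity(rec_a.get(field) or "", rec_b.get(field) or "")
        for field, weight in WEIGHTS.items()
    )
```

Checking the deterministic rule first keeps the cheap, unambiguous cases away from the fuzzier scoring path.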

3. Golden Record Creation

Once duplicates are identified, MDM creates a golden record. This is a single, authoritative master record that merges the best attributes from each source. This might mean taking the most recently updated address from one system while pulling the validated account ID from another. The golden record becomes the source of truth.
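A minimal sketch of that merge in Python follows. The two survivorship rules mirror the example above (newest address, account ID from the most trusted source); the source names, the trust ranking and the record layout are assumptions made for illustration.

```python
from datetime import date

# Hypothetical trust ranking for identifiers: lower number = more trusted.
SOURCE_PRIORITY = {"billing": 0, "crm": 1}


def build_golden_record(records: list[dict]) -> tuple[dict, dict]:
    """Merge matched records into (golden, lineage).

    lineage maps each golden attribute to the source that supplied it.
    """
    golden: dict = {}
    lineage: dict = {}

    # Rule 1: address comes from the most recently updated record that has one.
    with_address = [r for r in records if r.get("address")]
    if with_address:
        newest = max(with_address, key=lambda r: r["updated"])
        golden["address"] = newest["address"]
        lineage["address"] = newest["source"]

    # Rule 2: account_id comes from the most trusted source that has one.
    with_account = [r for r in records if r.get("account_id")]
    if with_account:
        trusted = min(with_account, key=lambda r: SOURCE_PRIORITY.get(r["source"], 99))
        golden["account_id"] = trusted["account_id"]
        lineage["account_id"] = trusted["source"]

    return golden, lineage


matched = [
    {"source": "crm", "updated": date(2024, 6, 1),
     "address": "12 Main Street", "account_id": None},
    {"source": "billing", "updated": date(2023, 1, 15),
     "address": "9 Old Road", "account_id": "ACC-42"},
]
golden, lineage = build_golden_record(matched)
```

Recording lineage alongside the merged values makes it possible to explain later why the golden record holds a given attribute.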

4. Distribution and Synchronization

The master record is then published back out to consuming systems, either in batch or in real time. When a downstream application queries customer data, it receives the governed, deduplicated version rather than its own local copy. Changes made in any connected system feed back through MDM, triggering re-evaluation and redistribution as needed.

MDM Implementation Styles

Organizations implement MDM in a few different architectural patterns depending on their needs:

Consolidation Style

Data from source systems is merged into a central MDM hub. Source systems are not modified; the hub is a read-only reference layer.

Registry Style

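The MDM system maintains a cross-reference index (knowing, for example, that record #123 in System A is the same as record #456 in System B) without storing a full copy of the data. This is lightweight, but because the hub holds only links, consumers still have to assemble a complete record from the source systems at read time.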
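A registry can be sketched as little more than a pair of lookup tables. The Python below is an illustrative toy, with a hypothetical `Registry` class and made-up master ID `M-1`, that links the two example records without storing any of their attributes.

```python
from typing import Optional


class Registry:
    """Cross-reference index: stores links between IDs, not the records."""

    def __init__(self) -> None:
        self._master_of: dict[tuple[str, str], str] = {}
        self._members: dict[str, set[tuple[str, str]]] = {}

    def link(self, master_id: str, system: str, local_id: str) -> None:
        """Attach a source record to a master ID, replacing any older link."""
        key = (system, local_id)
        previous = self._master_of.get(key)
        if previous is not None and previous != master_id:
            self._members[previous].discard(key)
        self._master_of[key] = master_id
        self._members.setdefault(master_id, set()).add(key)

    def master_for(self, system: str, local_id: str) -> Optional[str]:
        """Return the master ID for a source record, or None if unlinked."""
        return self._master_of.get((system, local_id))

    def equivalents(self, system: str, local_id: str) -> set[tuple[str, str]]:
        """Return the other source records linked to the same master ID."""
        master_id = self.master_for(system, local_id)
        if master_id is None:
            return set()
        return self._members[master_id] - {(system, local_id)}


registry = Registry()
registry.link("M-1", "system_a", "123")
registry.link("M-1", "system_b", "456")
```

Asking for the equivalents of record 123 in System A returns record 456 in System B, and the caller then fetches the actual attributes from that system.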

Coexistence Style

The MDM hub creates golden records, but source systems retain their own copies. The hub syncs updates back to sources, keeping both current.

Centralized (Authoritative) Style

The MDM hub becomes the system of record. All reads and writes flow through it. This approach offers the highest consistency, but it also demands the greatest architectural commitment.

Most enterprise MDM programs blend these styles across different data domains depending on how much control the organization can realistically exert over each system.

MDM in Modern Enterprise Architecture

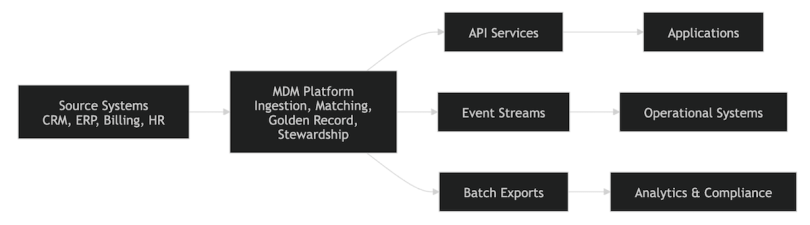

At a high level, a modern MDM architecture looks like this:

Source Systems (CRM, ERP, Billing, HR) feed into an MDM platform, which is responsible for ingestion, matching, golden record creation and stewardship. From there, master data flows out through three channels: API services (consumed by applications in real time), event streams (consumed by operational systems via Kafka or equivalent) and batch exports (consumed by analytics pipelines and compliance systems).

This architecture reflects how modern MDM has evolved. Rather than a single integration path, the MDM layer serves as a platform that different consumers access in the way that fits their latency and access patterns: real-time APIs for transactional systems, event streams for operational propagation and batch exports for analytical workloads.

But this is only half the picture. Modern enterprise architecture has further complicated — and improved — the MDM model.

MDM Vs. Data Mesh

Data mesh advocates for domain-oriented data ownership, where each business domain (payments, identity, product catalog) owns and publishes its own data as a product rather than feeding a central platform. This creates real tension with traditional MDM, which assumes a central hub with centralized authority.

The resolution most mature organizations are reaching is a hybrid model: domain teams own their data products and govern their local master data, while a central MDM layer establishes shared standards, cross-domain identity resolution and compliance guarantees. The data mesh handles distribution and autonomy; MDM handles consistency and trust. They are not competitors. Instead, they are complementary layers.

API-First Master Data Services

Rather than a monolithic MDM hub that other systems integrate with via batch ETL, modern MDM increasingly exposes master data as first-class APIs — RESTful, gRPC or GraphQL services that any application can query at runtime. This means:

- Downstream consumers get real-time, authoritative data rather than stale overnight snapshots.

- The MDM layer becomes a platform service, not an integration bottleneck.

- Golden records can be versioned and queried with point-in-time accuracy for audit and compliance.
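To illustrate the last point, here is one possible way to support point-in-time queries, sketched in Python with the standard library's `bisect` module. The `VersionedRecord` class and its method names are hypothetical; a real service would sit behind an API and a durable store.

```python
from bisect import bisect_right
from datetime import datetime
from typing import Optional


class VersionedRecord:
    """Keeps every version of a golden record, ordered by effective time."""

    def __init__(self) -> None:
        self._valid_from: list[datetime] = []
        self._versions: list[dict] = []

    def put(self, valid_from: datetime, attributes: dict) -> None:
        """Append a new version; versions must arrive in time order."""
        if self._valid_from and valid_from <= self._valid_from[-1]:
            raise ValueError("versions must be appended in increasing time order")
        self._valid_from.append(valid_from)
        self._versions.append(dict(attributes))

    def as_of(self, when: datetime) -> Optional[dict]:
        """Return the version in effect at `when`, or None if none existed yet."""
        index = bisect_right(self._valid_from, when)
        return self._versions[index - 1] if index else None


customer = VersionedRecord()
customer.put(datetime(2023, 1, 1), {"address": "9 Old Road"})
customer.put(datetime(2024, 6, 1), {"address": "12 Main Street"})
```

An auditor asking what the address was at the end of 2023 gets the older version back, even though the record has since changed.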

Event-Driven Propagation

When a golden record changes (say a customer updates their address, a product is deprecated or a supplier is merged), that change needs to propagate to all consuming systems quickly. Modern MDM architectures use event streaming (Apache Kafka, Amazon Kinesis, Google Cloud Pub/Sub) to publish master data change events. Downstream services subscribe to these streams and update their local state in near real time, rather than waiting for nightly batch syncs.

This shift from batch (pull) to event-driven (push) propagation reduces latency from hours to seconds while decoupling producers from consumers and improving overall system resilience.
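The push model can be illustrated with a small in-memory sketch in Python. This is deliberately not a Kafka, Kinesis or Pub/Sub client: the `ChangeBus` class, the topic name and the event shape are all assumptions, and delivery here is synchronous, which a real stream would not be.

```python
from typing import Callable

Handler = Callable[[dict], None]


class ChangeBus:
    """In-memory stand-in for an event stream; delivery is synchronous."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = {}

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers.setdefault(topic, []).append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Push the event to every subscriber; return how many received it."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


def cache_updater(cache: dict) -> Handler:
    """Build a handler that keeps one consumer's local copy current."""
    def on_change(event: dict) -> None:
        cache[event["customer_id"]] = dict(event["attributes"])
    return on_change


support_cache: dict = {}
billing_cache: dict = {}
bus = ChangeBus()
bus.subscribe("customer.changed", cache_updater(support_cache))
bus.subscribe("customer.changed", cache_updater(billing_cache))

delivered = bus.publish(
    "customer.changed",
    {"customer_id": "C-001", "attributes": {"address": "12 Main Street"}},
)
```

The publisher never names its consumers, so a new downstream system can subscribe without any change on the MDM side.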

Domain Ownership Vs. Centralized Governance

The most important architectural question in enterprise MDM today is not which tool to use; it’s who is accountable for data quality.

Centralized governance works well for standards, policies and cross-domain identity resolution. But trying to centralize operational data stewardship, where every correction flows through a single team, creates a bottleneck that cannot scale. The emerging pattern is federated stewardship: domain teams own quality for their own data products, a central MDM platform enforces shared contracts (schemas, ID formats, required fields), and a governance council resolves cross-domain conflicts.
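A shared contract of this kind can be as simple as a list of required fields plus format rules that every domain team validates against before publishing. The Python sketch below assumes a hypothetical customer contract; the ID pattern and the two-letter country rule are invented for the example.

```python
import re

# Hypothetical shared contract that a central platform might publish.
CUSTOMER_CONTRACT = {
    "required": ["customer_id", "name", "country"],
    "patterns": {"customer_id": r"C-\d{6}", "country": r"[A-Z]{2}"},
}


def violations(record: dict, contract: dict) -> list[str]:
    """Return a list of contract violations; an empty list means compliant."""
    problems = []
    for field in contract["required"]:
        if not record.get(field):
            problems.append(f"missing required field: {field}")
    for field, pattern in contract["patterns"].items():
        value = record.get(field)
        if value and not re.fullmatch(pattern, str(value)):
            problems.append(f"bad format for {field}: {value!r}")
    return problems
```

Domain teams stay free to model their own data as they like, as long as what they publish passes the shared check.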

In practice, MDM is evolving from a centralized hub toward a hybrid approach that combines domain ownership with centralized governance standards. Instead of a single monolithic system, organizations expose master data as APIs and event streams, enabling real-time synchronization across services while preserving domain autonomy.

Key Components of an MDM System

A production MDM implementation typically involves:

Data Quality Engine

Rules and routines that cleanse, standardize and validate incoming data (normalizing phone number formats, validating postal codes, flagging missing required fields).
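As an illustration, the Python sketch below applies the three kinds of rule just mentioned. It assumes 10-digit US phone numbers and US ZIP codes purely to keep the example short; the field names and issue labels are also hypothetical.

```python
import re

REQUIRED_FIELDS = ("name", "phone", "postal_code")
US_ZIP = re.compile(r"\d{5}(-\d{4})?")  # assumes US-style postal codes


def normalize_phone(raw: str) -> str:
    """Strip punctuation and return +1XXXXXXXXXX (assumes US numbers)."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    if len(digits) != 10:
        raise ValueError(f"unexpected phone number: {raw!r}")
    return "+1" + digits


def cleanse(record: dict) -> tuple[dict, list[str]]:
    """Return a cleaned copy of the record plus a list of quality issues."""
    cleaned = dict(record)
    issues = []
    for field in REQUIRED_FIELDS:
        if not cleaned.get(field):
            issues.append(f"missing:{field}")
    if cleaned.get("phone"):
        try:
            cleaned["phone"] = normalize_phone(cleaned["phone"])
        except ValueError:
            issues.append("invalid:phone")
    postal = cleaned.get("postal_code")
    if postal and not US_ZIP.fullmatch(postal.strip()):
        issues.append("invalid:postal_code")
    return cleaned, issues
```

Returning issues instead of raising lets the pipeline keep the record and hand the problems to a steward rather than dropping data silently.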

Matching Engine

Algorithms that identify duplicate or related records across sources, configurable by data domain.

Stewardship Workflow

A UI or process for human data stewards to review ambiguous matches, resolve conflicts and make authoritative decisions when automation cannot.
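One common way to decide what reaches a steward is to route candidate pairs by match score. The Python sketch below shows the idea; the two thresholds are arbitrary placeholders, not recommended values, and the queue is an in-memory stand-in for a real review tool.

```python
from collections import deque

# Placeholder thresholds; real values would be tuned per data domain.
AUTO_MERGE_AT = 0.92
REVIEW_AT = 0.75

review_queue: deque = deque()


def route(pair_id: str, score: float) -> str:
    """Decide what happens to a candidate match based on its score."""
    if score >= AUTO_MERGE_AT:
        return "auto_merge"
    if score >= REVIEW_AT:
        # Ambiguous: park it for a human steward to decide.
        review_queue.append((pair_id, score))
        return "steward_review"
    return "no_match"
```

Only the ambiguous middle band consumes human attention, which keeps the steward workload proportional to genuine uncertainty.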

Golden Record Store

The persistent store of master records with full lineage, tracking which source system contributed which attribute.

Integration Layer

APIs, event streams or batch exports that publish master data to consuming systems.

Governance Metadata

Ownership definitions, data dictionaries and audit logs that track what changed, when and by whom.

Benefits of Master Data Management

Poor master data is estimated to cost organizations millions annually in reconciliation costs, failed integrations and compliance risk. When done well, MDM eliminates that drag and delivers compounding value across the organization.

Operational Efficiency

Teams stop wasting time reconciling conflicting data. A single customer record means a single support ticket, not three.

Better Analytics

Reports built on clean, consistent master data produce reliable results. Bad master data is one of the most common root causes of dashboard inconsistencies.

Regulatory Compliance

Regulations like GDPR require organizations to honor data subject requests across all systems. MDM makes it possible to find and act on every record tied to a given individual.

Customer Experience

A unified customer profile enables personalization, prevents duplicate outreach and ensures every touchpoint reflects the same understanding of the customer.

Reduced Integration Cost

When every system speaks the same master data language — the same customer ID, the same product code — integration projects become dramatically cheaper.

Common Challenges in MDM

MDM projects are notoriously difficult not because of the technology, but because of the organizational dynamics involved.

Data Ownership Disputes

When multiple systems each claim to be the authoritative source, reaching consensus on who governs the golden record is a political challenge as much as a technical one.

Data Quality Debt

Ingesting years of dirty, inconsistent data requires significant up-front investment in quality rules and stewardship capacity.

System Proliferation

In large enterprises, the number of systems holding copies of master data can be surprisingly large. Full coverage requires sustained discovery and integration effort.

Change Management

Source system teams must accept that their local copy is no longer the source of truth, which is a shift that requires executive sponsorship and clear incentives.

MDM in Practice

Consider a financial services company offering banking, investing and lending through separate platforms built independently over the years. A customer appears as three separate records with different account IDs, slightly different names and different addresses. When they call support, the agent sees fragments. When compliance needs a unified view, it requires manual reconciliation.

With MDM, the company establishes a single customer master. A matching process links the three records. A golden record is created with the verified identity, consolidated contact details and a canonical customer ID that all three platforms adopt. The support agent now sees a complete view. Compliance queries become straightforward. Regulatory reporting covers the full relationship without manual joins.
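The linking step in this scenario can be sketched in Python with a small union-find structure: if the banking record matches the investing record, and the investing record matches the lending record, all three end up under one canonical ID. The record identifiers and the `CUST-` ID format are hypothetical.

```python
def assign_canonical_ids(record_ids: list[str],
                         matched_pairs: list[tuple[str, str]]) -> dict[str, str]:
    """Group transitively matched records and give each group one canonical ID."""
    parent = {record_id: record_id for record_id in record_ids}

    def find(x: str) -> str:
        # Walk up to the group's root, shortening the path along the way.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for a, b in matched_pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            # Always keep the smaller ID as root so results are deterministic.
            parent[max(root_a, root_b)] = min(root_a, root_b)

    roots = sorted({find(record_id) for record_id in record_ids})
    canonical = {root: f"CUST-{i:04d}" for i, root in enumerate(roots, start=1)}
    return {record_id: canonical[find(record_id)] for record_id in record_ids}


ids = ["bank:1001", "invest:A7", "lend:L-55", "bank:2002"]
pairs = [("bank:1001", "invest:A7"), ("invest:A7", "lend:L-55")]
canonical_ids = assign_canonical_ids(ids, pairs)
```

Here the three platform records for the same person share one canonical ID, while the unrelated `bank:2002` record gets its own.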

At Coinbase, data at scale presents similar challenges: the same user interacting across multiple products, wallets and regions needs to be understood as a single entity for compliance, support and product continuity. MDM principles — unified identity, governed master records, synchronized downstream systems — are foundational to operating responsibly at that scale.

Get Started With Master Data Management

Master data management is not glamorous infrastructure, but it is foundational. It allows organizations to trust the data underlying their decisions, comply with regulatory requirements and deliver coherent experiences to customers and partners.

The technical work of matching, deduplication, and distribution is solvable. The harder work, like deciding who owns the data, what the rules are, and how disputes get resolved, is what separates organizations with reliable data from those perpetually fighting fires caused by fragmented, inconsistent records.

In an environment where decisions are only as good as the data behind them, MDM is not optional. It’s the foundation that makes data trustworthy, systems interoperable and scaling sustainable.

The AI Behind This Article

As I put this piece on master data management together, I leaned on AI in the same way I do in my day-to-day work at Coinbase: as a fast, reliable assistant and an extra reviewer, not a stand-in for judgment. The overall argument, examples and architectural framing are mine. In particular, the discussions of data mesh, event-driven propagation and federated stewardship come directly from how I think about building modern data platforms. I used AI primarily to probe the outline, sharpen explanations and sanity-check the technical details.

That mirrors how I work at Coinbase. AI helps accelerate the groundwork, drafting, summarizing and iterating, so I can spend more time on the decisions that actually require human judgment: designing systems that are both scalable and trustworthy, tackling data quality at the scale of millions of users and building infrastructure that compliance, engineering, and product teams can depend on together.

If you’re interested in joining an AI-forward company that’s building the financial system of the future, you can explore open roles on Coinbase’s Built In profile.

Frequently Asked Questions

Why is master data management important?

MDM is important because it ensures that organizations operate on a consistent and trusted version of their core data. Without it, teams often work with fragmented and conflicting information, leading to inefficiencies, inaccurate reporting and poor customer experiences. At scale, MDM also becomes essential for regulatory compliance, system interoperability and reducing the cost and complexity of integrations.

What types of data are managed in MDM?

Master data management focuses on the core business entities that are shared across systems. This typically includes customers, products, employees, suppliers and locations. These entities are relatively stable compared to transactional data, but they are critical because they provide the context that makes transactions meaningful and consistent across applications.

What is a golden record in MDM?

A golden record is the single, authoritative representation of a business entity within an MDM system. It is created by merging and reconciling data from multiple source systems, selecting the most accurate and complete attributes from each. The golden record becomes the trusted source of truth that downstream systems rely on for consistent and reliable data.

How does MDM differ from data warehousing or data lakes?

While data warehouses and data lakes are designed for storing and analyzing large volumes of data, MDM focuses on maintaining the accuracy and consistency of core business entities. MDM systems create and govern golden records that operational systems rely on, whereas data platforms are typically used for analytics and reporting. In modern architectures, MDM often complements these systems by ensuring that the underlying data they consume is clean and consistent.