As data scientists, one of our jobs is to create the whole design for any given machine learning project. Whether we’re working on a classification, regression or deep learning project, it falls to us to decide on the data preprocessing steps, feature engineering, feature selection, evaluation metric, and the algorithm as well as hyperparameter tuning for said algorithm. And we spend a lot of time worrying about these issues.

All of that is well and good. But there are a lot of other important things to consider when building a great machine learning system. For example, do we ever think about how we will deploy our models once we have them?

I have seen a lot of machine learning projects, and many of them are doomed to fail before they even begin because they don’t have a set plan for production from the outset. In my view, the process requirements for a successful ML project begin with thinking about how and when the model will go to production.

1. Establish a Baseline at the Outset

I hate how machine learning projects start in most companies. Tell me if you’ve ever heard something like this: “We will create a state-of-the-art model that will function with greater than 95 percent accuracy.” What about this: “Let’s build a time series model which will give an RMSE that’s close to zero.” Such an expectation from a model is absurd because the world we live in is not deterministic. For example, think about trying to create a model to predict whether it will rain tomorrow or whether a customer will like a product. The answer to these questions may depend on a lot of features we don’t have access to. This strategy also hurts the business because a model that is unable to meet such lofty expectations usually gets binned. To avoid this kind of failure, you need to create a baseline at the start of a project.

So what is a baseline? It’s a simple metric that helps us to understand a business’ current performance on a particular task. If the models beat or at least match that metric, we are in the realm of profit. If the task is currently done manually, beating the metric means we can automate it.

And you can get the baseline results before you even start creating models. For example, let’s imagine that we’ll be using RMSE as an evaluation metric for our time series model and the result came out to be X. Is X a good RMSE? Right now, it’s just a number. To figure that out, we need a baseline RMSE to see if we are doing better or worse than the previous model or some other heuristic.

The baseline could come from a model that is currently employed on the same task. You could also use a simple heuristic as a baseline. For instance, in a time series model, a good baseline to aim to beat is last-day prediction, i.e., predicting each day’s value to be the same as the previous day’s and calculating the RMSE of those predictions. If your model is not able to beat even this naive criterion, then you know for sure it is not adding any value.
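As a minimal sketch of the last-day baseline, the snippet below compares a model's RMSE with the RMSE of simply repeating the previous day's value. The series, the model predictions, and the function names are all made up for illustration.

```python
import math

def rmse(actual, predicted):
    """Root mean squared error between two equal-length sequences."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and the same length")
    squared_errors = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

def last_value_baseline_rmse(series):
    """RMSE of predicting each day with the previous day's value."""
    # The first observation has no previous day, so it only serves as a prediction.
    return rmse(series[1:], series[:-1])

# Hypothetical daily values, and hypothetical model predictions for days 2..6.
daily_sales = [100, 102, 101, 105, 107, 110]
model_predictions = [101, 102, 104, 106, 109]

baseline_rmse = last_value_baseline_rmse(daily_sales)
model_rmse = rmse(daily_sales[1:], model_predictions)

# The model is only worth discussing if it beats the naive baseline.
model_adds_value = model_rmse < baseline_rmse
print(f"baseline={baseline_rmse:.3f} model={model_rmse:.3f} adds_value={model_adds_value}")
```

The point of the comparison is that the model's RMSE stops being "just a number" once it sits next to the baseline's.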

Or how about an image classification task? You could take 1,000 labeled samples, have humans classify them, and then use human accuracy as your baseline. Suppose humans reach only about 70 percent accuracy because the task is highly complex (perhaps there are numerous classes to choose from) or pretty subjective (as in predicting emotion based on a person’s face). In that case, you can automate the process once your models reach a level of performance similar to a human’s.

Try to be aware of the performance you’re going to get even before you create your models. Setting some pie-in-the-sky, out-of-this-world expectations is only going to disappoint you and your client and stop your project from going to production.

2. Continuous Integration Is the Way Forward

So now you’ve created your model, and it performs better than the baseline or your current model on your local test data set. Should you go forward to production? At this point, you have two choices:

- Go into an endless loop of improving your model further: I have seen countless examples where a business would not consider changing the current system and would ask for the best-performing system possible before actually pushing the new system to production.

- Test your model in production settings, get more insights about what could go wrong, and then continue improving the model with continuous integration.

I am a fan of the second approach. In his awesome third course in the Coursera Deep Learning Specialization, Andrew Ng says:

Don’t start off trying to design and build the perfect system. Instead, build and train a basic system quickly — perhaps in just a few days. Even if the basic system is far from the “best” system you can build, it is valuable to examine how the basic system functions: you will quickly find clues that show you the most promising directions in which to invest your time.

Our motto should be that done is better than perfect.

If your new model is better than the model currently in production or your new model is better than the baseline, it doesn’t make sense to wait to go to production.

3. Make Sure to A/B Test

But is your model really better than the baseline? Sure, it performed better on the local test data set, but will it work well on the whole project in the production setting?

To test the validity of the assumption that your new model is better than the existing one, you can set up an A/B test. Some users (the test group) will see predictions from your model while some other users (the control group) will see the predictions from the previous model. In fact, this is the right way to deploy your model. And you might find that, indeed, your model is not as good as it seems.
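One way to split users into test and control groups is to hash a user ID, so the same user always sees the same model. The sketch below assumes string user IDs and models that are plain callables; the experiment name, the 10 percent test share, and the function names are illustrative choices, not a prescribed setup.

```python
import hashlib

def assign_group(user_id: str, experiment: str = "new-model-test",
                 test_share: float = 0.1) -> str:
    """Deterministically place a user in the 'test' or 'control' group."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 32 bits of the hash to a value in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "test" if bucket < test_share else "control"

def predict_for_user(user_id, features, new_model, old_model):
    """Serve the new model to the test group and the old model to everyone else."""
    group = assign_group(user_id)
    model = new_model if group == "test" else old_model
    # Return the group too, so outcomes can later be compared per group.
    return group, model(features)
```

Recording the group alongside each prediction is what lets you compare the two models on the same business metric afterwards.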

Keep in mind that there is nothing wrong with a model turning out to be inaccurate. What’s wrong is to not anticipate that you could be wrong. The fastest way to truly destroy a project is by stubbornly neglecting to confront your own fallibility.

Pointing out the precise reason for your model’s poor performance in production settings can be difficult, but some causes could be:

- The data coming in in real time might be significantly different from the training data, i.e., the training and real-time data distributions differ. This could happen with ad classification models, in which preferences change over time.

- You may not have built the preprocessing pipeline correctly, meaning you have included some features in your training data set that will not be available at production time. For example, you might add a variable called “COVID Lockdown(0/1)” to your data set. In a production setting, though, you may not know how long the lockdowns will remain in effect.

- Maybe there is a bug in your implementation that even the code review was not able to catch.
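For the first cause above, a simple check is to compare the distribution of a feature in the training data against what arrives in production. The sketch below uses the two-sample Kolmogorov-Smirnov statistic, which is one possible choice among many; the 0.2 alert threshold is an arbitrary assumption you would need to tune.

```python
from bisect import bisect_right

def ks_statistic(train_values, live_values):
    """Largest gap between the empirical CDFs of two samples.

    0.0 means the samples look identical; 1.0 means they do not overlap at all.
    """
    train_sorted = sorted(train_values)
    live_sorted = sorted(live_values)
    if not train_sorted or not live_sorted:
        raise ValueError("both samples must be non-empty")
    largest_gap = 0.0
    # The largest gap between two step functions occurs at an observed value.
    for x in train_sorted + live_sorted:
        train_cdf = bisect_right(train_sorted, x) / len(train_sorted)
        live_cdf = bisect_right(live_sorted, x) / len(live_sorted)
        largest_gap = max(largest_gap, abs(train_cdf - live_cdf))
    return largest_gap

def looks_drifted(train_values, live_values, threshold=0.2):
    """Flag a feature whose live distribution has moved away from training.

    The threshold is a hypothetical default, not a recommended value.
    """
    return ks_statistic(train_values, live_values) > threshold
```

Running a check like this per feature on a schedule gives an early warning before the drop shows up in business metrics.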

Whatever the cause, the lesson is that you shouldn’t go into production at full scale. A/B testing is always an excellent way to go forward. And if you find that your model is flawed, have something ready to fall back upon, like perhaps an older model. Even if it’s working well, things might break that you couldn’t have anticipated, and you need to be prepared.
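A fallback can be as simple as a wrapper that catches failures in the new model and answers with the older one instead. This is only a sketch under the assumption that both models are callables taking the same features; the names are hypothetical.

```python
def predict_with_fallback(features, new_model, fallback_model):
    """Return (prediction, source), using the fallback model if the new one fails."""
    try:
        prediction = new_model(features)
        if prediction is None:
            raise ValueError("new model returned no prediction")
        return prediction, "new"
    except Exception:
        # In a real system, this is where you would log the failure and alert someone.
        return fallback_model(features), "fallback"
```

Returning the source alongside the prediction makes it easy to count how often the fallback path is actually used.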

4. Your Model Might Not Even Go to Production

Let’s imagine that you’ve created this impressive machine learning model. It gives 90 percent accuracy, but it takes around 10 seconds to fetch a prediction. Or maybe it takes a lot of resources to predict.

Is that acceptable? For some use cases, maybe, but most likely not.
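Before arguing about whether a model is too slow, it helps to measure it. The sketch below times a prediction function on a few sample inputs and compares the worst case against a latency budget; the function names and the budget value are assumptions you would replace with your own requirements.

```python
import statistics
import time

def measure_latency(predict, sample_inputs, repeats=5):
    """Return the median and worst-case wall-clock seconds per prediction call."""
    timings = []
    for _ in range(repeats):
        for x in sample_inputs:
            start = time.perf_counter()
            predict(x)
            timings.append(time.perf_counter() - start)
    return {"median_s": statistics.median(timings), "max_s": max(timings)}

def meets_latency_budget(report, budget_s):
    """True if even the slowest observed call stayed within the budget."""
    return report["max_s"] <= budget_s

# Usage with a stand-in model; swap in your real prediction function.
report = measure_latency(lambda x: x * 2, sample_inputs=[1, 2, 3])
print(report, meets_latency_budget(report, budget_s=0.1))
```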

In the past, many Kaggle competition winners ended up creating monster ensembles to take the top spots on the leaderboard. A particularly mind-blowing example is the ensemble that was used to win an Otto classification challenge on Kaggle.

Another example is the Netflix Million Dollar Recommendation Engine Challenge. The Netflix team ended up never even using the winning solution due to the engineering costs involved. This sort of thing happens all the time: the cost or engineering effort of putting a complex model into production is so high that it is not profitable to go forward.

So how do you make your models accurate yet easy on the machine?

Here, the concept of teacher-student models, or knowledge distillation, becomes useful. In knowledge distillation, we train a smaller student model on the outputs of a bigger, already trained teacher model. The main aim here is to mimic the teacher model, which is the best model we have, with a student model that has far fewer parameters. You can take the soft labels (probabilities) from the teacher model and use them as the training targets for the student model. The intuition behind this is that the soft labels are much more informative than the hard labels. For example, a cat/dog teacher classifier might say that the probabilities for the classes are cat 0.8, dog 0.2. Such a label is more informative because the student classifier then learns that the image is of a cat but that it also slightly resembles a dog. Or, if the probabilities of both are similar, our student classifier would also learn to mimic the teacher and become less confident about that particular example.
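To make the soft-label idea concrete, here is a rough sketch of a loss a student could be trained on, written in plain Python for a single example. Mixing in the hard label through an `alpha` weight is a common variant and an assumption on my part; with `alpha=1.0` the student learns from the teacher's probabilities only, as described above.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(target_probs, predicted_probs, eps=1e-12):
    """Cross-entropy of predicted probabilities against target probabilities."""
    return -sum(t * math.log(p + eps) for t, p in zip(target_probs, predicted_probs))

def distillation_loss(student_logits, teacher_probs, hard_label, alpha=0.5):
    """Weighted mix of the soft-label loss (teacher) and the hard-label loss."""
    student_probs = softmax(student_logits)
    one_hot = [1.0 if i == hard_label else 0.0 for i in range(len(student_probs))]
    soft_loss = cross_entropy(teacher_probs, student_probs)
    hard_loss = cross_entropy(one_hot, student_probs)
    return alpha * soft_loss + (1 - alpha) * hard_loss

# Teacher says: cat 0.8, dog 0.2 (class 0 = cat, class 1 = dog).
teacher_probs = [0.8, 0.2]
matching_student = [math.log(0.8), math.log(0.2)]  # reproduces the teacher
overconfident_student = [10.0, -10.0]              # almost certain it is a cat

loss_matching = distillation_loss(matching_student, teacher_probs, 0, alpha=1.0)
loss_overconfident = distillation_loss(overconfident_student, teacher_probs, 0, alpha=1.0)
```

With soft labels only, the student that reproduces the teacher's 0.8/0.2 split gets a lower loss than the one that is nearly certain the image is a cat, which is exactly the "also slightly resembles a dog" signal that hard labels throw away.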

Another way to decrease run times and resource usage at prediction time is to forgo a little bit of accuracy and performance by going with simpler models. In some cases, you won’t have a lot of computing power available at prediction time. Sometimes, you will even have to predict on edge devices, and so you want to have a lighter model. You can either build simpler models or try using knowledge distillation for such use cases.

5. Maintenance and Feedback Loop

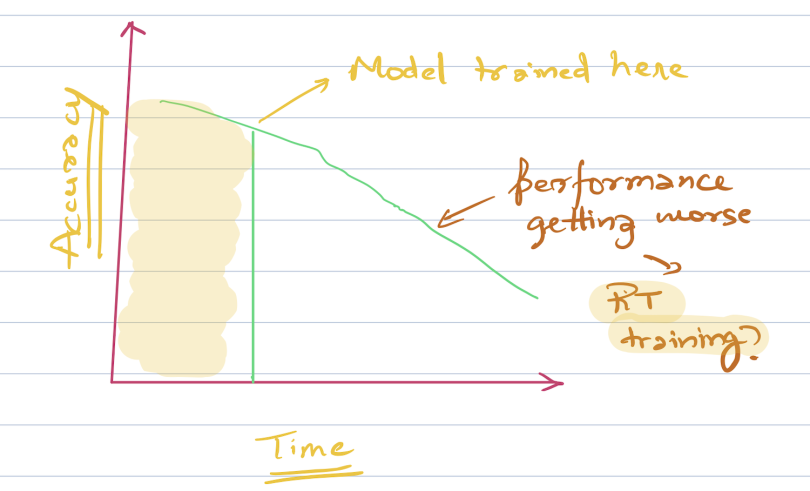

The world is not constant and neither are your model weights. The world around us is rapidly changing, and what was applicable two months ago might not be relevant now. In a way, the models you build are reflections of the world, and if the world is changing, your models should be able to reflect this change.

Model performance typically deteriorates with time. For this reason, you must think of ways to upgrade your models as part of the maintenance cycle from the outset.

The frequency of this cycle depends entirely on the business problem that you are trying to solve. For example, with an ad prediction system in which the users tend to be fickle and buying patterns emerge continuously, the frequency needs to be pretty high. By contrast, in a review sentiment analysis system, the frequency need not be that high as language doesn’t change its structure quite so often.
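Instead of retraining purely on a calendar, you could also let measured performance trigger the cycle. The sketch below flags retraining when the recent average error drifts more than a tolerance above a reference error; the 10 percent tolerance and the function name are hypothetical and would depend on the business problem.

```python
def needs_retraining(recent_errors, reference_error, tolerance=0.10):
    """Flag retraining when recent average error exceeds the reference by more than `tolerance`."""
    if not recent_errors:
        return False  # nothing observed yet, so there is no evidence of decay
    recent_average = sum(recent_errors) / len(recent_errors)
    return recent_average > reference_error * (1 + tolerance)
```

For a fast-moving system such as ad prediction, this check might run daily on a short window; for sentiment analysis, a longer window and a less frequent check would likely do.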

I would also like to acknowledge the importance of the feedback loop in a machine learning system. Let’s say that, in a dog versus cat classifier, you predicted that a particular image is a dog, but with low probability. Can we learn something from these low-confidence examples? You can send them to manual review to check whether they could be used to retrain the model. In this way, you train your classifier on instances it is unsure about.
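Routing low-confidence predictions to manual review can be sketched in a few lines. The tuple layout `(item_id, label, probability)` and the 0.7 threshold are assumptions made for this example.

```python
def split_for_review(predictions, threshold=0.7):
    """Separate confident predictions from those that should go to manual review."""
    confident, needs_review = [], []
    for item_id, label, probability in predictions:
        target = confident if probability >= threshold else needs_review
        target.append((item_id, label, probability))
    return confident, needs_review

# Hypothetical classifier outputs: (image id, predicted label, predicted-class probability).
outputs = [("img-1", "dog", 0.97), ("img-2", "dog", 0.55), ("img-3", "cat", 0.62)]
confident, needs_review = split_for_review(outputs)
```

Once reviewers have labeled the uncertain items, those examples can be added to the next training set.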

When thinking of production, also come up with a plan for training, maintaining, and improving the model using feedback.

Conclusion

These are some of the things I find important before thinking of putting a model into production. Although this is not an exhaustive list of things that you need to think about and problems that could arise, let it act as food for thought for the next time you create a machine learning system.