Self-driving cars are vehicles that use sensors and artificial intelligence to transport people on roads autonomously without human input. Self-driving cars are also known as autonomous vehicles or driverless cars.

Do Self-Driving Cars Actually Exist?

SAE International (formerly the Society of Automotive Engineers) established six levels of vehicular autonomy, ranging from level zero (conventional vehicles driven entirely by a human) to level five, where vehicles no longer require human input to operate effectively. Currently, we are far from level five automation since there are many situations with which autonomous vehicles cannot yet cope.
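To make the six levels concrete, here is a minimal sketch of how they might be represented in code. The level names are paraphrased from the SAE J3016 standard, and the helper `human_must_supervise` is a hypothetical name introduced only for illustration.

```python
from enum import IntEnum


class SAELevel(IntEnum):
    """Driving automation levels, with names paraphrased from SAE J3016."""
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


def human_must_supervise(level: SAELevel) -> bool:
    """At levels 0 to 2 the human driver is expected to monitor the road constantly."""
    return level <= SAELevel.PARTIAL_AUTOMATION


for level in SAELevel:
    print(level.value, level.name, human_must_supervise(level))
```

Using an ordered enum keeps comparisons such as "level two or below" readable without scattering magic numbers through the code.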

How Do Self-Driving Cars Work?

The main goal of self-driving cars is to drive from point A to point B safely. To do this, they rely on a pre-established map called a high-definition map, or HD map. Self-driving vehicles use a combination of radar, video cameras, LiDAR and GPS to locate themselves and surrounding vehicles within their HD map.

Radar and LiDAR

Radar is a sensor that uses radio waves to detect close objects, whereas LiDAR uses light waves to detect objects that are further away with great precision. That said, since LiDAR uses light waves, it can be susceptible to fog, whereas radar is not. In addition, self-driving cars use video cameras to detect and track traffic lights, road signs and pedestrians.

GPS

GPS provides a coarse geographic location of the vehicle (longitude, latitude and elevation), which can be fine-tuned when combined with the input from all other sensors. GPS sensors don’t tend to work well in tunnels, but a self-driving car can compensate for this with input from other sensors.
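One common way to bridge a GPS outage is dead reckoning: starting from the last known position and advancing it using the vehicle's own speed and turn rate. The sketch below is an illustration under simplifying assumptions (flat ground, a local x/y frame in meters, noise-free measurements). The `Pose` type and `dead_reckon` function are hypothetical names, not part of any particular vehicle stack.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x_m: float          # east position in a local frame, meters
    y_m: float          # north position in a local frame, meters
    heading_rad: float  # 0 = east, counterclockwise positive


def dead_reckon(pose: Pose, speed_mps: float, yaw_rate_rps: float, dt_s: float) -> Pose:
    """Advance the pose by one time step using only on-board motion data."""
    heading = pose.heading_rad + yaw_rate_rps * dt_s
    return Pose(
        x_m=pose.x_m + speed_mps * math.cos(heading) * dt_s,
        y_m=pose.y_m + speed_mps * math.sin(heading) * dt_s,
        heading_rad=heading,
    )


# Last GPS fix at the tunnel entrance, then 10 s at 20 m/s heading due east.
pose = Pose(0.0, 0.0, 0.0)
for _ in range(100):
    pose = dead_reckon(pose, speed_mps=20.0, yaw_rate_rps=0.0, dt_s=0.1)
print(round(pose.x_m, 1), round(pose.y_m, 1))
```

In practice, small errors in speed and turn rate accumulate over time, which is why the estimate is corrected as soon as GPS or another absolute reference becomes available again.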

Ultrasonic Sensors

Self-driving cars also use ultrasonic sensors for close-range object detection. Ultrasonic sensors are cost-effective and aren’t negatively affected by environmental factors. On the other hand, they have low resolution and a very short range. We normally use ultrasonic sensors for emergency brake assistance and to check blind spots.
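An ultrasonic sensor typically measures distance by timing how long an emitted pulse takes to bounce back. The following minimal sketch shows that arithmetic. It assumes a fixed speed of sound of about 343 m/s, and the function names and the 0.5 m warning threshold are illustrative choices rather than values from any specific sensor.

```python
SPEED_OF_SOUND_MPS = 343.0  # approximate speed of sound in air at about 20 °C (assumed constant)


def echo_distance_m(round_trip_s: float) -> float:
    """Distance to the obstacle; the pulse travels there and back, hence the division by two."""
    if round_trip_s < 0:
        raise ValueError("round_trip_s must be non-negative")
    return SPEED_OF_SOUND_MPS * round_trip_s / 2.0


def should_warn(round_trip_s: float, threshold_m: float = 0.5) -> bool:
    """Flag an obstacle that is closer than the (hypothetical) warning threshold."""
    return echo_distance_m(round_trip_s) < threshold_m


print(echo_distance_m(0.010))  # echo received after 10 ms
print(should_warn(0.002))      # echo received after 2 ms
```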

More sensors can be placed in the wheels, doors, front and back of the car, but the exact sensor layout ultimately depends on the manufacturer.

Self-driving cars use all the input from their sensors to locate themselves within an HD map and generate a route toward a destination. A route generated in an HD map contains much more data than a regular GPS route. For example, an autonomous vehicle will know when it needs to change lanes, where traffic lights are supposed to be and even road conditions.
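To illustrate how an HD map route can carry more than a list of turns, here is a small sketch of a route as a sequence of segments with lane, traffic light and road condition data. The `RouteSegment` fields and the `lane_changes` helper are hypothetical and far simpler than any commercial HD map format.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RouteSegment:
    road_name: str
    length_m: float
    target_lane: int                        # lane index the vehicle should occupy
    speed_limit_kph: Optional[float] = None
    traffic_light_at_end: bool = False
    road_condition: str = "unknown"         # e.g. "dry", "wet", "roadworks"


def lane_changes(route: List[RouteSegment]) -> List[str]:
    """List the points along the route where the vehicle must change lanes."""
    changes = []
    for prev, nxt in zip(route, route[1:]):
        if prev.target_lane != nxt.target_lane:
            changes.append(
                f"after {prev.road_name}: lane {prev.target_lane} -> {nxt.target_lane}"
            )
    return changes


# Example route with made-up road names.
route = [
    RouteSegment("Main St", 400.0, target_lane=1, speed_limit_kph=50.0, traffic_light_at_end=True),
    RouteSegment("Ring Rd", 1200.0, target_lane=0, speed_limit_kph=80.0, road_condition="wet"),
    RouteSegment("Exit 4", 300.0, target_lane=0, speed_limit_kph=40.0),
]
print(lane_changes(route))
```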

Since HD maps can contain so much information, they may need a lot of maintenance. Companies such as TomTom (International), Blickfeld (Germany), Civil Maps (U.S.A.) and many others have found commercial value in maintaining proprietary HD maps.

Are Self-Driving Cars Safe?

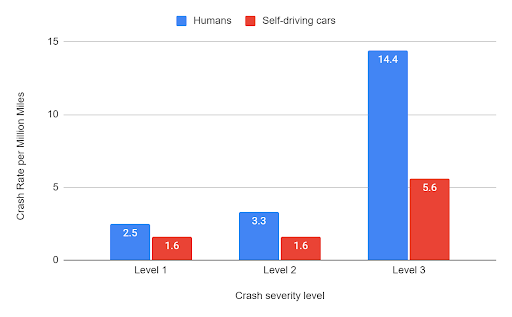

Experts believe self-driving cars can achieve higher levels of safety than human-driven vehicles. In fact, when we compare accident data for self-driving cars and traditional cars, normalized to crash rate per million miles driven, self-driving cars consistently end up with a lower accident rate.

The figure above uses the SHRP 2 NDS data set and crash levels from one to three. Level one is the worst type of crash, which involves airbag deployment and injury. Level two crashes involve no airbag deployment or injury but at least $1,500 in damage. Level three crashes involve physical contact with another object or vehicle, but with damage that does not meet the level one or level two criteria.
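The normalization and the three crash levels described here can be sketched in a few lines. This is an illustration only: the function names are hypothetical, the example counts are placeholders rather than real data, and treating either airbag deployment or injury as sufficient for level one is an assumption.

```python
def crash_rate_per_million_miles(crashes: int, miles_driven: float) -> float:
    """Normalize a raw crash count so fleets with different mileage can be compared."""
    if miles_driven <= 0:
        raise ValueError("miles_driven must be positive")
    return crashes * 1_000_000 / miles_driven


def crash_level(airbag_deployed: bool, injury: bool, damage_usd: float) -> int:
    """Classify a crash that involved physical contact into levels 1 (worst) to 3."""
    if airbag_deployed or injury:   # assumption: either condition is enough for level 1
        return 1
    if damage_usd >= 1500:
        return 2
    return 3


# Placeholder numbers for illustration, not measured data.
print(crash_rate_per_million_miles(crashes=3, miles_driven=1_500_000))
print(crash_level(airbag_deployed=False, injury=False, damage_usd=2000.0))
```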

However, public opinion falls on the other side in this matter. Users expect self-driving vehicles to be as close to perfection as possible. When self-driving cars from companies like Waymo and Tesla become involved in accidents, we get a reminder that, despite wanting autonomous vehicles to never become involved in an accident, that’s almost statistically impossible, especially when they share the road with human drivers.

Components of a Self-Driving Car

We can identify three core components that make a vehicle autonomous: a high-definition map (HD Map), a state and geolocation estimator and a motion manager.

1. HD Map (High-Definition Map)

The very first thing a self-driving car needs is the ability to detect its location in the world. To achieve this, an autonomous vehicle needs to have an HD map that includes plenty of data about the road and the surroundings.

HD maps help with the management of lateral, longitudinal and speed control; these three aspects of autonomous driving allow the car to regulate speed as well as change lanes safely. Thanks to HD maps, self-driving cars always know in which lane they are located throughout an established route, which includes all necessary lane changes they will eventually need.

Some companies dedicate their efforts to creating and maintaining HD maps. One good example is TomTom, which offers the TomTom HD Map. TomTom’s proprietary HD Map offers accuracy down to a few centimeters and helps sensors understand their surroundings.

2. State and Geolocation Estimator

State estimators coordinate the input from all the sensors in the autonomous vehicle and keep the vehicle’s geolocation within the HD map up-to-date. The state estimator does this by receiving input and aggregating data from all different parts of the vehicle.

Different situations might favor different sensors. For example, if the vehicle is inside a tunnel the GPS signal might not be reliable and the state estimator might have to rely on other sensors such as LiDAR, radar and the tires’ motion to update the vehicle’s geolocation.

At the same time, on a highway or motorway, a truck might be in front of the vehicle blocking the LiDAR sensor from perceiving the road ahead. In this situation, our self-driving car will be unable to see what’s ahead. Nevertheless, with a reliable HD map and GPS signal, our vehicle can have a good idea of what lies ahead of it (whether it be the next junction or exit).

Ultimately, a state estimator will receive and combine data from multiple sensors within the autonomous vehicle. Not all sensors send data at the same rate. A LiDAR system can emit many pulses per millisecond while GPS takes longer to update. The state estimator unifies the values from these inputs into a single estimate.
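One widely used technique for combining sensors that report at different rates is a Kalman filter. The sketch below is a one-dimensional version that tracks position along a lane. It assumes a fast wheel-speed signal for prediction and an occasional GPS-like position fix for correction, with made-up noise values. It illustrates the idea and is not a description of how any particular vehicle implements its state estimator.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    position_m: float  # estimated position along the lane
    variance: float    # uncertainty of that estimate, in m^2


def predict(est: Estimate, speed_mps: float, dt_s: float, process_var: float) -> Estimate:
    """Fast step: move the estimate forward using wheel speed; uncertainty grows."""
    return Estimate(est.position_m + speed_mps * dt_s, est.variance + process_var)


def update(est: Estimate, measured_m: float, measurement_var: float) -> Estimate:
    """Slow step: blend in a position fix, weighted by the relative uncertainties."""
    gain = est.variance / (est.variance + measurement_var)
    return Estimate(
        est.position_m + gain * (measured_m - est.position_m),
        (1.0 - gain) * est.variance,
    )


est = Estimate(position_m=0.0, variance=1.0)
for step in range(1, 21):        # wheel speed arrives every 0.05 s
    est = predict(est, speed_mps=10.0, dt_s=0.05, process_var=0.01)
    if step % 10 == 0:           # a (hypothetical, slightly noisy) position fix every 0.5 s
        est = update(est, measured_m=step * 0.5 + 0.3, measurement_var=4.0)
print(est)
```

The gain decides how much to trust each source: a noisy fix nudges the estimate only slightly, while a precise one pulls it strongly toward the measurement.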

3. Motion Manager

The motion manager, also called the motion planner, is in charge of the vehicle’s movement. It is where the artificial intelligence operates the vehicle based on the vehicle’s pre-established route. If we intend to move a self-driving car from point A to point B, the first option might be going forward (or reversing or turning). The motion planner is in charge of determining which maneuvers are required for the vehicle to reach its destination.

When the state estimator reports an obstacle blocking the vehicle’s route, the motion planner is in charge of calling for an emergency stop. Similarly, when it’s time for the vehicle to change lanes, the motion planner calls for a lane-change maneuver.
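The decisions described in this section can be sketched as a simple priority rule: stop for obstacles first, then handle lane changes, otherwise keep going. The `Maneuver` names, the lane numbering (0 is the leftmost lane) and the stopping-distance check are all assumptions made for this illustration; a real planner weighs many more factors.

```python
from enum import Enum
from typing import Optional


class Maneuver(Enum):
    EMERGENCY_STOP = "emergency_stop"
    CHANGE_LANE_LEFT = "change_lane_left"
    CHANGE_LANE_RIGHT = "change_lane_right"
    KEEP_LANE = "keep_lane"


def next_maneuver(
    obstacle_distance_m: Optional[float],  # None when the route ahead is clear
    stopping_distance_m: float,
    current_lane: int,                     # assumption: lane 0 is the leftmost lane
    target_lane: int,
) -> Maneuver:
    """Pick the next maneuver; safety checks take priority over route following."""
    if obstacle_distance_m is not None and obstacle_distance_m <= stopping_distance_m:
        return Maneuver.EMERGENCY_STOP
    if target_lane < current_lane:
        return Maneuver.CHANGE_LANE_LEFT
    if target_lane > current_lane:
        return Maneuver.CHANGE_LANE_RIGHT
    return Maneuver.KEEP_LANE


print(next_maneuver(obstacle_distance_m=8.0, stopping_distance_m=12.0, current_lane=1, target_lane=1))
print(next_maneuver(obstacle_distance_m=None, stopping_distance_m=12.0, current_lane=2, target_lane=1))
```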

Benefits of Self-Driving Cars

Once we achieve a high level of autonomy for self-driving cars it will become a matter of changing our mentality and learning to rely on these vehicles. Here are some of the benefits we can achieve as a society that embraces self-driving cars.

Reduced Accident Rates

One major feature of autonomous vehicles is connectivity. Having connected autonomous vehicles on the road means that vehicles can report road hazards or nearby accidents to each other. Even without connectivity, a road full of autonomous vehicles means a road without tired, distracted or drunk drivers.

Improved Mobility for Older People and People With Disabilities

A world where self-driving cars are the norm would mean that seniors and those with disabilities would be less limited in terms of transportation and accessibility. Those who are unable or unqualified to drive for any number of reasons would be able to fetch their own groceries or get to their doctor appointments without worry.

Self-Driving Cars-as-a-Service

If you need a car today, you not only need to buy or lease the car, but you also need a garage or parking space, not to mention the fact that you need parking anywhere you take your car. You’ll also need insurance and gas. These expenses add up quickly.

This used to be the case with web servers. If you wanted to develop a business that required a web app or back-end service, you needed to spend a lot of money on a server. Cloud computing brought us the as-a-service model. With as-a-service products, you pay less money up front, get the primary product you want and let the provider worry about all the infrastructure details. So, if you want a server only for one hour every day, you only pay for one hour every day. That is the business model behind Google Cloud Platform and Amazon Web Services.

In the future, access to autonomous vehicles might mean that we can request a car just like we request Ubers. Imagine a world in which you pay an autonomous fleet service fee to carpool to work or large entertainment events. It’s like public transit but more precise and (potentially) accessible. The price of these services could potentially become so competitive in urban areas that we lose the necessity of owning a car and instead we buy the service of a car only for as long as we need it.
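The pay-per-use argument comes down to simple arithmetic: owning a car costs a fixed amount every month, while a service costs a fare per trip. The sketch below computes the number of trips at which the two are equal. All figures are placeholders chosen for illustration, not real prices, and the function names are hypothetical.

```python
def monthly_ownership_cost(payment: float, insurance: float, parking: float, fuel: float) -> float:
    """Fixed monthly cost of owning a car, regardless of how much it is used."""
    return payment + insurance + parking + fuel


def monthly_service_cost(trips: int, fare_per_trip: float) -> float:
    """Cost of using an autonomous fleet service, paid only for trips taken."""
    return trips * fare_per_trip


def break_even_trips(ownership_cost: float, fare_per_trip: float) -> float:
    """Number of monthly trips at which the service costs as much as owning."""
    if fare_per_trip <= 0:
        raise ValueError("fare_per_trip must be positive")
    return ownership_cost / fare_per_trip


# Placeholder figures for illustration only.
owning = monthly_ownership_cost(payment=400.0, insurance=120.0, parking=150.0, fuel=130.0)
print(owning)                                        # 800.0
print(break_even_trips(owning, fare_per_trip=10.0))  # 80.0
```

Under these placeholder numbers, anyone taking fewer than 80 trips a month would pay less with the service than with ownership.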

Challenges of Self-Driving Cars

Self-driving cars face two challenges on the way to the maximum level of autonomy: motion sickness and accident liability.

Motion Sickness

Motion sickness occurs when the movement you see is different from what your inner ear senses. This happens to some people when attempting to read a book in a moving vehicle. Two factors increase the chance of motion sickness in autonomous vehicles. First, if you’re unable to anticipate where and when the vehicle moves, you could develop motion sickness. Second, if you don’t keep your eyes on the direction of motion, you might easily develop motion sickness.

Accident Liability

Within the context of self-driving cars, accident liability refers to the question of who is liable for an accident caused by a self-driving vehicle. As we get closer to the highest level of autonomy, newer designs for autonomous vehicles will not include a dashboard, steering wheel or brake pedals. If a car doesn’t receive any human input, it becomes much more difficult for law enforcement agencies and insurance companies to determine liability. State and federal legislators will need to get involved to determine how we decide liability between the car manufacturer and the autonomous vehicle’s occupants.

History of Self-Driving Cars

The idea of self-driving cars dates back to the 1920s, when the Houdina Radio Control company showcased a radio-controlled vehicle in New York City. In the 1950s, RCA Labs and General Motors demonstrated their ideas for autonomous vehicles that were to be controlled by special circuitry installed below the roads (think streetcars, only with the rails underground).

Advances in AI have been particularly important in making driverless cars a reality. Machine learning techniques like convolutional neural networks (CNNs), backpropagation (first practically implemented in 1989) and max pooling (first introduced in 1992 as part of the Cresceptron framework) have become building blocks of modern computer vision.
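As a small illustration of one of these building blocks, the sketch below applies 2x2 max pooling to a tiny grid of numbers: each non-overlapping 2x2 window is replaced by its largest value, which shrinks the feature map while keeping the strongest responses. The function name and the input values are made up for this example, and production systems use optimized library routines instead.

```python
from typing import List


def max_pool_2x2(image: List[List[float]]) -> List[List[float]]:
    """Downsample by taking the maximum of each non-overlapping 2x2 window.

    A trailing odd row or column is dropped.
    """
    rows, cols = len(image), len(image[0])
    return [
        [
            max(image[r][c], image[r][c + 1], image[r + 1][c], image[r + 1][c + 1])
            for c in range(0, cols - 1, 2)
        ]
        for r in range(0, rows - 1, 2)
    ]


feature_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 1],
    [0, 1, 5, 6],
    [2, 2, 7, 8],
]
print(max_pool_2x2(feature_map))  # [[4, 2], [2, 8]]
```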

Thanks to all the latest developments in the field of computer vision, cameras — crucial for object detection and recognition — have become important sensors in autonomous vehicles.

From 2004 to 2007, the Defense Advanced Research Projects Agency (DARPA) of the United States of America held three challenges. In these challenges, they offered a one-million-dollar prize to any team that could deliver an autonomous vehicle capable of crossing 150 miles through the Mojave Desert. In the first challenge, no one finished. In the second challenge, five vehicles completed the course.

In 2007, DARPA held its third and final challenge in an urban environment in Victorville, California. In this challenge, vehicles needed to drive in traffic and perform a series of maneuvers such as merging, passing and parking, showing real-time intelligent decision making in reaction to other vehicles on the road. Carnegie Mellon University won the third competition. These challenges and their prizes were a great incentive for researchers and students to work on the early problems of self-driving cars.

These days, most car companies offer a certain level of autonomy, such as park assist or collision detection. Furthermore, companies like Waymo and Tesla are pursuing full autonomy. In 2014, Tesla Motors announced its first Autopilot feature, and in 2018 Waymo launched an autonomous taxi (robotaxi) service called Waymo One.

Uber also made an attempt at self-driving cars for food delivery and taxi services. However, they sold their driverless car division to a Silicon Valley startup called Aurora toward the end of 2020. Although Uber is working in partnership with Aurora to release a driverless vehicle, they also struck a deal with a joint venture between Hyundai and Aptiv known as Motional, which seems closer to delivering a driverless fleet for Uber.