Quantum computers can model quantum systems at the molecular level and estimate the conditional probability of events. On certain benchmark tasks, they can also finish in a weekend what would take classical computers billions of years.

What Is Quantum Computing?

Quantum computing is a process that uses the laws of quantum mechanics to solve problems too large or complex for traditional computers. Quantum computers rely on qubits to run and solve multidimensional quantum algorithms.

Quantum computing is vastly different from classical computing. Quantum physicist Shohini Ghose, of Wilfrid Laurier University, has likened the difference between quantum and classical computing to light bulbs and candles: “The light bulb isn’t just a better candle; it’s something completely different.”

Quantum computing solves mathematical problems and runs quantum models using the tenets of quantum theory. Some of the quantum systems it is used to model include photosynthesis, superconductivity and complex molecular formations.

To understand quantum computing and how it works, it’s necessary to first understand qubits, superposition, entanglement and quantum interference.

What Are Qubits?

Quantum bits, or qubits, are the basic unit of information in quantum computing, much like the binary bit in classical computing.

Qubits use superposition to be in multiple states at one time. Binary bits can only represent 0 or 1. Qubits can be 0 or 1, or a superposition of both: a weighted combination of the two states that resolves to 0 or 1 only when measured.
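As a rough illustration of the difference, a single qubit can be described by two numbers called amplitudes, one for 0 and one for 1, whose squared magnitudes give the odds of each measurement result. The sketch below uses plain Python rather than any quantum library, and the function name and the three sample states are made up for illustration.

```python
import math

def measurement_probabilities(alpha, beta):
    """Return (P(0), P(1)) for a qubit with amplitude alpha on 0 and beta on 1."""
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    if not math.isclose(norm, 1.0, rel_tol=1e-9):
        raise ValueError("amplitudes must satisfy |alpha|^2 + |beta|^2 = 1")
    return abs(alpha) ** 2, abs(beta) ** 2

# Hypothetical example states: a classical-looking 0, an even mix, and a lopsided mix.
states = {
    "zero": (1.0, 0.0),
    "equal": (1 / math.sqrt(2), 1 / math.sqrt(2)),
    "skewed": (math.sqrt(0.8), math.sqrt(0.2)),
}

for name, (alpha, beta) in states.items():
    p0, p1 = measurement_probabilities(alpha, beta)
    print(f"{name}: P(0)={p0:.2f}, P(1)={p1:.2f}")
```

A binary bit corresponds only to the first case; the other two have no classical equivalent.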

What are qubits made of? The answer depends on the architecture of the quantum system, as some require extremely cold temperatures to function properly. Qubits can be made from trapped ions, photons, artificial or real atoms, or quasiparticles, while binary bits are typically stored in silicon-based chips.

What Is Superposition?

To explain superposition, some people invoke Schrödinger’s cat, while others point to the moments a coin is in the air during a coin toss. Quantum superposition is a state in which quantum particles are a combination of all possible states. The particles remain in that combination until the quantum computer measures them, at which point each settles into a single definite state.

The more interesting fact about superposition is the ability to look at quantum states in multiple ways and ask them different questions, said John Donohue, scientific outreach manager at the University of Waterloo’s Institute for Quantum Computing. That is, rather than having to perform tasks sequentially, like a traditional computer, quantum computers can run vast numbers of parallel computations.

The top-line takeaway is that superposition lets a quantum computer, loosely speaking, “try all paths at once,” although any single measurement still returns only one result.
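The coin-toss picture can be sketched in a few lines of plain Python. This is a simplified simulation on a classical machine, assuming a single qubit and the standard Hadamard gate, which turns a definite 0 into an equal superposition; the helper names are illustrative only. Each simulated measurement yields a single 0 or 1, and the superposition shows up only in the statistics across many runs.

```python
import math
import random

def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (alpha, beta)."""
    alpha, beta = state
    s = 1 / math.sqrt(2)
    return (s * (alpha + beta), s * (alpha - beta))

def measure(state, rng):
    """Sample one outcome, 0 or 1, with probability equal to the squared amplitude."""
    p0 = abs(state[0]) ** 2
    return 0 if rng.random() < p0 else 1

rng = random.Random(7)           # fixed seed so the run is repeatable
plus = hadamard((1.0, 0.0))      # a definite 0 becomes an equal superposition

counts = {0: 0, 1: 0}
for _ in range(10_000):
    counts[measure(plus, rng)] += 1

print(counts)  # roughly half zeros and half ones
```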

What Is Entanglement?

When quantum particles are linked so that their measurement outcomes are correlated with one another, the state is called entanglement. During entanglement, measuring one qubit can be used to draw conclusions about the others. Entanglement helps quantum computers solve larger problems and process larger stores of data and information.
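A minimal way to see this correlation is to simulate the simplest entangled pair, often called a Bell state, in which two qubits are equally likely to be read as 00 or 11 and never as 01 or 10. The sketch below is a classical simulation in plain Python with illustrative names; it shows the measurement statistics only, not how the state is prepared on real hardware.

```python
import math
import random

# Amplitudes for the four two-qubit outcomes; only 00 and 11 are possible.
s = 1 / math.sqrt(2)
bell_state = {"00": s, "01": 0.0, "10": 0.0, "11": s}

def sample(state, rng):
    """Draw one joint measurement result, weighted by squared amplitude."""
    outcomes = list(state)
    weights = [abs(state[o]) ** 2 for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]

rng = random.Random(11)
results = [sample(bell_state, rng) for _ in range(1000)]

# Count how often the two qubits give the same bit.
agree = sum(1 for r in results if r[0] == r[1])
print(f"qubits agreed in {agree} of {len(results)} measurements")
```

Knowing the first bit of any result is enough to know the second, which is the sense in which one qubit's measurement supports conclusions about the other.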

What Is Quantum Interference?

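As qubits experience superposition, they can also naturally experience quantum interference. Interference occurs when the states in a superposition reinforce or cancel one another, which shifts the probability of qubits collapsing one way or another. Quantum algorithms are built to use this effect so that wrong answers cancel out and right answers are reinforced, while unwanted interference from the environment has to be reduced to ensure accurate results.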
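Interference can be illustrated with a small, simplified simulation in plain Python, assuming a single qubit and the standard Hadamard gate (the helper name is illustrative). Applying the gate once spreads the qubit evenly across 0 and 1. Applying it a second time makes the two contributions to the 1 outcome cancel, so the qubit returns to a definite 0.

```python
import math

def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (alpha, beta)."""
    alpha, beta = state
    s = 1 / math.sqrt(2)
    return (s * (alpha + beta), s * (alpha - beta))

def probabilities(state):
    """Squared magnitudes of the amplitudes: the odds of reading 0 or 1."""
    return tuple(abs(a) ** 2 for a in state)

start = (1.0, 0.0)
once = hadamard(start)    # equal superposition of 0 and 1
twice = hadamard(once)    # contributions to the 1 outcome cancel

print("after one gate: ", probabilities(once))
print("after two gates:", probabilities(twice))
```

A purely random coin flipped twice would still land 50/50; the return to a certain 0 is the signature of amplitudes cancelling rather than probabilities adding.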

How Do Quantum Computers Work?

Qubits and Computational Algorithms

Quantum computers process information in a fundamentally different way than classical computers. Traditional computers operate on binary bits but quantum computers transmit information via qubits. The qubit’s ability to remain in superposition is the heart of quantum’s potential for exponentially greater computational power.

Quantum computers use a variety of algorithms to conduct measurements and observations. After a user inputs these algorithms, the computer creates a multidimensional space where patterns and individual data points are housed. For example, if a user wants to solve a protein folding problem by finding the fold that uses the least energy, the quantum computer would evaluate the possible combinations of folds; the lowest-energy combination is the answer to the problem.

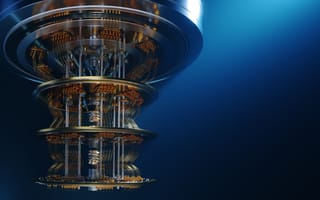

Quantum-Specific Computer Infrastructure

The physical build of a true quantum computer consists mainly of three parts:

- A traditional computer and infrastructure that runs programming and sends instructions to the qubits.

- A method to transfer signals from the computer to the qubits.

- A storage unit for the qubits. It must be able to stabilize the qubits, which means meeting certain requirements that range from temperatures near absolute zero to housing in a vacuum chamber.

Physical Isolation and Cooling Mechanisms

Any number of simple actions or variables can send error-prone qubits falling into decoherence, or the loss of a quantum state. Things that can cause a quantum computer to crash include measuring qubits and running operations. In other words: using it. Even small vibrations and temperature shifts can cause qubits to decohere.

That’s why quantum computers are kept isolated, and the ones that run on superconducting circuits (the most prominent method, favored by Google and IBM) have to be kept near absolute zero, at roughly -460 degrees Fahrenheit.
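For a sense of scale, absolute zero is 0 kelvin, or -459.67 degrees Fahrenheit. The short conversion below assumes an operating temperature of 15 millikelvin purely for illustration; that figure is not taken from this article, and actual values vary by system.

```python
ABSOLUTE_ZERO_F = -459.67  # absolute zero expressed in degrees Fahrenheit

def kelvin_to_fahrenheit(kelvin):
    """Convert a temperature in kelvin to degrees Fahrenheit."""
    return kelvin * 9 / 5 + ABSOLUTE_ZERO_F

# Assumed, illustrative operating point for a superconducting chip.
operating_temp_k = 0.015

print(f"{kelvin_to_fahrenheit(operating_temp_k):.2f} F at {operating_temp_k} K")
print(f"{kelvin_to_fahrenheit(273.15):.2f} F at the freezing point of water")
```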

Examples of Quantum Computing Chips

The quantum computing race dates back to the Cold War and still carries political implications today. With companies and institutions jockeying for position, here are some of the top quantum computing chips to come out of this heated competition.

IBM’s Heron and Condor Chips

IBM has long been a leader in the quantum space and remains at the forefront with its latest generation of quantum chips. IBM’s Heron processor demonstrates a much lower error rate than its predecessors, which is why it’s being used to power IBM’s first modular quantum computer. While the IBM Condor processor may not match the Heron’s accuracy, its massive size supports quantum computing on a larger scale.

Google’s Willow Chip

Google’s Willow chip manages to reduce its errors as it uses more qubits, even though more qubits typically means more errors. In addition, the chip took only five minutes to complete a benchmark computation that would’ve taken the fastest supercomputer 10 septillion years. These achievements lay the foundation for large-scale quantum computing and have even reignited debates around multiverse theory.

Microsoft’s Majorana 1 Chip

Microsoft’s Majorana 1 chip promises a new era in quantum computing, thanks to its use of the first topoconductor — a material that provides greater control over Majorana particles, resulting in more stable qubits. These advancements mean a reliable, large-scale quantum computer capable of handling complex problems could become a reality within years instead of decades.

Amazon’s Ocelot Chip

Just a week after Microsoft’s announcement, Amazon released its first quantum chip, known as Ocelot, in partnership with the California Institute of Technology. The chip uses a technique called bosonic error correction, which involves increasing the energy in a quantum system to reduce errors instead of increasing the number of qubits. This approach could cut the costs of error correction by up to 90 percent, according to some estimates.

USTC’s Zuchongzhi-3 Chip

As a testament to China’s growing influence in the quantum realm, the University of Science and Technology of China (USTC) released its Zuchongzhi-3 chip. The chip excelled at random circuit sampling tasks, finishing a task 15 orders of magnitude faster than the world’s fastest supercomputers. This makes Zuchongzhi-3 one quadrillion times faster than the fastest supercomputers, and one million times faster than Google’s Willow.

Quantum Computing Real-World Applications

Quantum computing can improve problem-solving by running quantum algorithms on quantum hardware. The technology could lead to new approaches to managing air traffic control, package deliveries, energy storage and more.

Climate and Energy Optimization

Quantum computing can help with modeling and researching solvents and absorbents — substances that can aid in carbon capture to reduce carbon emissions and combat climate change. In addition, quantum computing could be used to optimize electrical grids. A pilot program between Iberdrola and Multiverse Computing found some quantum-powered algorithms outperformed their classical counterparts in managing grids’ energy usage.

Investment Banking and Trading

Investment banks could use the broader capacity of quantum computing to simulate various scenarios and test the risks and outcomes of certain market conditions. When it comes to measuring macroconditions, quantum computing could even be used to generate digital twins to represent a bank in different positions under a range of conditions.

Data Encryption

The arrival of quantum computing technology could signal a new era of cybersecurity, where companies must grapple with quantum computers that can easily get past today’s encryption systems. This has further accelerated innovation within the digital security sector as companies like Apple develop post-quantum encryption systems to prepare for the next wave of advanced cyber attacks.

Logistics and Route Efficiency

Because of its ability to complete billions of computations simultaneously, quantum computing can consider different travel routes and quickly determine the fastest route available. Not only does this benefit logistics teams, but it also addresses traffic issues. In 2019, Volkswagen used a quantum computer to calculate the most efficient routes for public transport buses, shortening passengers’ commutes and facilitating better traffic flow.
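To see why routing strains classical machines, consider a brute-force search that checks every possible order of stops. The sketch below is a classical illustration in plain Python, not the method Volkswagen used, and the four-stop distance table is invented for the example. The number of orderings grows factorially, so the approach that works for four stops becomes impractical for a few dozen.

```python
import itertools
import math

def route_length(route, dist):
    """Total distance of a route given as a sequence of stop indices."""
    return sum(dist[a][b] for a, b in zip(route, route[1:]))

def shortest_route(dist, start=0):
    """Check every ordering of stops and return (length, route) for the best one."""
    stops = [i for i in range(len(dist)) if i != start]
    best = None
    for order in itertools.permutations(stops):
        route = (start, *order, start)
        length = route_length(route, dist)
        if best is None or length < best[0]:
            best = (length, route)
    return best

# Made-up symmetric distances between four stops.
dist = [
    [0, 2, 9, 10],
    [2, 0, 6, 4],
    [9, 6, 0, 3],
    [10, 4, 3, 0],
]

best_length, best_route = shortest_route(dist)
print(best_length, best_route)

# How quickly the search space grows with the number of stops.
for stops in (5, 10, 20):
    print(stops, "stops ->", math.factorial(stops - 1), "possible orderings")
```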

Drug Development

In a potential turning point for quantum chemistry, researchers from the University of Melbourne leveraged the exascale computing power of the Frontier supercomputer to conduct simulations of biological systems. This will allow teams to model how drugs behave and how patients could react when taking them, resulting in faster and more accurate drug development.

Space Exploration

Quantum computers thrive in sub-zero temperatures, making them the perfect technology to deploy in space. As space exploration ramps up, quantum computers can handle the expansive data sets collected from telescopes and aid in complex tasks like analyzing black holes. Research teams could also use quantum computing to design models for different scenarios and develop forecasts for space trips.

Supply Chain Performance

Media and telecommunication companies can harness the computational power of quantum computers to monitor entire supply chain networks. With this ability, these businesses can make informed decisions on how to organize their supply chains according to data based on geography, sales and other factors. Teams can also monitor their equipment and better execute predictive maintenance with the help of quantum computing.

Molecular Modeling

One quantum computing breakthrough came in 2017, when researchers at IBM modeled beryllium hydride, at the time the largest molecule simulated on a quantum computer. Another key step arrived in 2019, when IonQ researchers used quantum computing to simulate a water molecule, helping advance computational chemistry.

Quantum Computing Challenges

Quantum Noise Disruptions

We’re still in what’s known as the Noisy Intermediate-Scale Quantum (NISQ) era. Quantum noise refers to any disturbance that affects the state of qubits, which can disrupt superposition, entanglement and the overall accuracy of quantum systems. This noise can be caused by factors like temperature changes and electromagnetic or mechanical fluctuations, making quantum computers incredibly difficult to keep in a proper quantum state. As such, NISQ computers can’t be trusted to make decisions of major commercial consequence and are currently used primarily for research and education.

Scaling Difficulties

While quantum computing has the potential to solve complex problems, the operational demands and the number of qubits required to complete these tasks are high, and the technology has yet to scale to meet them.

With qubits in particular, the challenge is two-fold, according to Jonathan Carter, a scientist at Lawrence Berkeley National Laboratory. First, individual physical qubits need to have better fidelity. That would conceivably happen through better engineering, discovering the optimal circuit layout and finding the optimal combination of components. Second, we have to arrange them to form logical qubits.

“Estimates range from hundreds to thousands to tens of thousands of physical qubits required to form one fault-tolerant qubit,” Carter said. “I think it’s safe to say that none of the technology we have at the moment could scale out to those levels.”

For example, researchers would need millions of qubits just to compute “the chemical properties of a novel substance,” theoretical physicist Sabine Hossenfelder noted in the Guardian. Plus, the fragility of large-scale quantum systems makes it difficult for current technologies to stabilize them long enough to even function.
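The arithmetic behind these estimates is simple multiplication, sketched below. The overhead values span the range Carter describes, but the count of 1,000 logical qubits is a hypothetical figure chosen only to make the numbers concrete.

```python
def physical_qubits_needed(logical_qubits, physical_per_logical):
    """Total physical qubits for a machine with the given error-correction overhead."""
    return logical_qubits * physical_per_logical

# Hypothetical machine size, chosen for illustration only.
LOGICAL_QUBITS = 1_000

# Overheads from "hundreds" to "tens of thousands" of physical qubits per logical qubit.
for overhead in (100, 1_000, 10_000):
    total = physical_qubits_needed(LOGICAL_QUBITS, overhead)
    print(f"{overhead:>6} physical per logical -> {total:,} physical qubits")
```

Even at the low end of the range, the totals quickly reach the hundreds of thousands, and at the high end they reach the millions.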

Algorithmic Limitations

The challenges that quantum computing faces aren’t strictly hardware-related. The “magic” of quantum computing also resides in algorithmic advances, “not speed,” explained Greg Kuperberg, a mathematician at the University of California at Davis.

“If you come up with a new algorithm, for a question that it fits, things can be exponentially faster,” he said.

Uncertainty Around Quantum Computing Standards

Another open question: Which method of quantum computing will become standard? While superconducting has borne the most fruit so far, researchers are exploring alternative methods that involve trapped ions, quantum annealing or so-called topological qubits. In Donohue’s view, it’s not necessarily a question of which technology is better so much as one of finding the best approach for different applications. For instance, superconducting chips naturally dovetail with the magnetic field technology that underpins neuroimaging.

Lack of Quantum Computing Expertise

One roadblock for quantum computing, according to Rebecca Krauthamer, CEO of quantum computing consultancy Quantum Thought, is a general lack of expertise. “There’s just not enough people working at the software level or at the algorithmic level in the field,” she said. Tech entrepreneur Jack Hidary’s team set out to count the number of people working in quantum computing and found only about 800 to 850 people, according to Krauthamer. “That’s a bigger problem to focus on, even more than the hardware,” she said. “Because the people will [need to] bring that innovation.”

Why Quantum Computing Is Important

Quantum Computers Can Review Classical Computer Results

The practical value of quantum computers for research and development is demonstrable, if decidedly small-scale. Donohue cites the molecular modeling of lithium hydride. That’s a small enough molecule that it can also be simulated using a supercomputer, but the quantum simulation provides an important opportunity to “check our answers” after a classical computer simulation.

These are generally still small problems that can be checked using classical simulation methods. “But it’s building toward things that will be difficult to check without actually building a large particle physics experiment, which can get very expensive,” Donohue said.

Quantum Computing Can Transform Cryptography

The cryptosystem known as RSA provides the safety structure for a host of privacy and communication protocols, from email to internet retail transactions. Its security rests on the fact that no classical computer can feasibly factor the very large numbers used to scramble your data, but a mature quantum computer could do so within a matter of hours. Thankfully, the technology is still a ways away, and the experts are on it.
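The link between RSA and quantum computing can be sketched with a toy key. An RSA public key includes a number n that is the product of two secret primes, and anyone who can factor n can rebuild the private key. Shor's algorithm, the quantum method usually discussed in this context, factors n by finding the period of a repeating sequence. In the plain-Python sketch below, that period is found by brute force on a classical machine, which only works because the key is tiny; the key values, message and function names are illustrative.

```python
import math

def find_period(a, n):
    """Brute-force the smallest r > 0 with a**r % n == 1.
    This is the step a quantum computer would speed up."""
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_via_period(n):
    """Try small bases until period finding yields two factors of n."""
    for a in range(2, n):
        shared = math.gcd(a, n)
        if shared != 1:
            return shared, n // shared   # the base already shares a factor
        r = find_period(a, n)
        if r % 2 == 1:
            continue                     # odd period: try another base
        half = pow(a, r // 2, n)
        if half == n - 1:
            continue                     # unhelpful case: try another base
        return math.gcd(half - 1, n), math.gcd(half + 1, n)
    raise ValueError("no factors found")

# Toy public key (n = 61 * 53), far too small for real use.
n, e = 3233, 17
message = 65
ciphertext = pow(message, e, n)

# An attacker who can factor n rebuilds the private exponent d.
p, q = factor_via_period(n)
d = pow(e, -1, (p - 1) * (q - 1))
recovered = pow(ciphertext, d, n)

print(sorted((p, q)), recovered)
```

Real RSA keys use numbers hundreds of digits long, for which the brute-force loop above would never finish.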

“What we hear from the academic community and from companies like IBM and Microsoft is that a 2026-to-2030 timeframe is what we typically use from a planning perspective in terms of getting systems ready,” said Mike Brown, CTO and co-founder of quantum-focused cryptography company ISARA Corporation.

Cryptographers from ISARA are among several contingents that have taken part in the Post-Quantum Cryptography Standardization project, a contest of quantum-resistant encryption schemes that was launched in 2016 by the National Institute of Standards and Technology. The aim is to standardize algorithms that can resist attacks levied by large-scale quantum computers.

The level of complexity and stability required of a quantum computer to launch the much-discussed RSA attack is extreme, even granting that timelines in quantum computing, particularly in terms of scalability, are points of contention.

The Future of Quantum Computing

Quantum computers are being used right now, but they are not presently “solving” climate change, turbocharging financial forecasting probabilities or performing other similarly lofty tasks that get bandied about in reference to quantum computing’s potential.

“The technology just isn’t quite there yet to provide a computational advantage over what could be done with other methods of computation at the moment,” Donohue said. “Most [commercial] interest is from a long-term perspective. [Companies] are getting used to the technology so that when it does catch up, and that timeline is a subject of fierce debate, they’re ready for it.”

Though quantum computing still has a ways to go before a wide-scale commercial debut, users can operate small-scale quantum processors via the cloud through IBM’s online Q Experience and its open-source software Qiskit. Microsoft and Amazon both now have similar platforms, dubbed Azure Quantum and Amazon Braket. There are also over 60 algorithms listed and over 400 papers cited at Quantum Algorithm Zoo, an online catalog of quantum algorithms compiled by Microsoft quantum researcher Stephen Jordan.

“That’s one of the cool things about quantum computing today,” Krauthamer said. “We can all get on and play with it.”

Frequently Asked Questions

What is quantum computing in simple terms?

Quantum computing refers to computing that operates according to the laws of quantum mechanics in order to solve certain problems faster than classical computers. Quantum computers use qubits, which can hold information in multiple states (such as 0 and 1) at once.

What can quantum computers do?

Quantum computers can run quantum algorithms to accelerate problem-solving processes. These processes may be applied to areas in medical research, financial modeling, AI and more to make decisions with increased accuracy and speed.

Do quantum computers exist now?

Quantum computers exist now, though they are mainly used in data centers, laboratories and universities for research and education purposes.

What is the main goal of quantum computing?

Quantum computing aims to speed up research and development initiatives as well as solve complex data or optimization problems that classical computers are unable to process.