Computers are great at working with standardized and structured data like database tables and financial records. They can process that data much faster than we humans can. But we humans don’t communicate in “structured data,” nor do we speak binary! We communicate using words, a form of unstructured data.

Unfortunately, computers suck at working with unstructured data because there are no standardized techniques to process it. When we program computers using something like C++, Java, or Python, we are essentially giving the computer a set of rules to operate by. With unstructured data, these rules are quite abstract and challenging to define concretely.

Human vs Computer understanding of language

Humans have been writing things down for thousands of years. Over that time, our brains have gained a tremendous amount of experience in understanding natural language. When we read something written on a piece of paper or in a blog post on the internet, we understand what it means in the real world. We feel the emotions that reading it elicits, and we often visualize how it would look in real life.

Natural language processing (NLP) is a sub-field of artificial intelligence focused on enabling computers to understand and process human languages, bringing computers closer to a human-level understanding of language. Computers don’t yet have the same intuitive understanding of natural language that humans do. They can’t fully grasp what a piece of language is trying to say. In a nutshell, a computer can’t read between the lines.

That being said, recent advances in machine learning (ML) have enabled computers to do quite a lot of useful things with natural language! Deep learning has enabled us to write programs to perform things like language translation, semantic understanding, and text summarization. All of these things add real-world value, making it easy for you to understand and perform computations on large blocks of text without the manual effort.

Let’s start with a quick primer on how NLP works conceptually. Afterwards we’ll dive into some Python code so you can get started with NLP yourself!

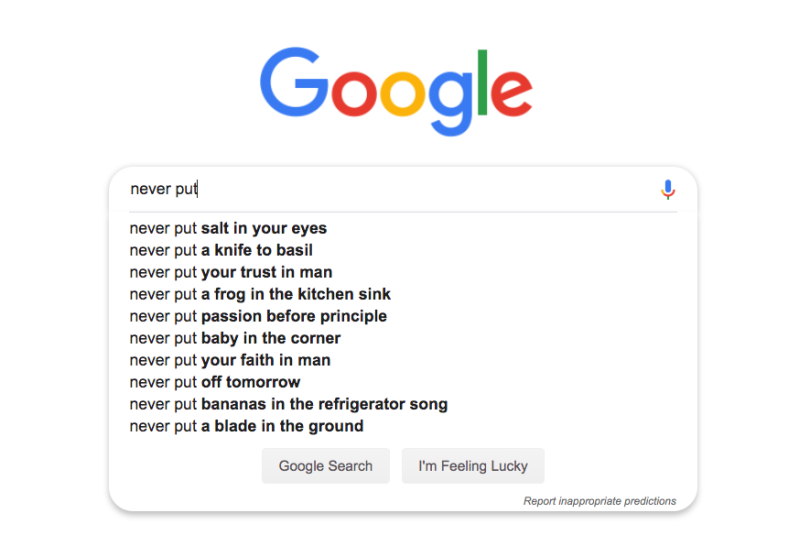

The real reason why NLP is hard

The process of reading and understanding language is far more complex than it seems at first glance. Many things go into truly understanding what a piece of text means in the real world. For example, what do you think the following piece of text means?

“Steph Curry was on fire last night. He totally destroyed the other team”

To a human it’s probably quite obvious what this sentence means. We know Steph Curry is a basketball player, or even if we don’t, we can tell that he plays on some kind of team, probably a sports team. When we see “on fire” and “destroyed,” we know that it means Steph Curry played really well last night and beat the other team.

Computers tend to take things a bit too literally. Viewing things literally like a computer, we would see “Steph Curry” and, based on the capitalization, assume it’s a person, place, or otherwise important thing, which is great! But then we see that Steph Curry “was on fire”... A computer might tell you that someone literally lit Steph Curry on fire yesterday! Yikes. After that, the computer might say that Mr. Curry has physically destroyed the other team. They no longer exist according to this computer. Great...
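To make the “too literal” failure concrete, here is a minimal sketch of a naive rule-based reader. The `LITERAL_MEANINGS` table and the `literal_reading` function are hypothetical names invented for this illustration; it is a toy, not how any real NLP system is built.

```python
# Toy illustration: a hand-written phrase table that maps phrases to their
# literal meaning, with no notion of idiom or context.
# LITERAL_MEANINGS and literal_reading are hypothetical names for this sketch.
LITERAL_MEANINGS = {
    "on fire": "is burning",
    "destroyed": "physically demolished",
}

def literal_reading(sentence):
    """Return the literal interpretation of every known phrase in the sentence."""
    lowered = sentence.lower()
    return [meaning for phrase, meaning in LITERAL_MEANINGS.items()
            if phrase in lowered]

sentence = "Steph Curry was on fire last night. He totally destroyed the other team"
print(literal_reading(sentence))
```

Every rule fires on the surface text alone, so the basketball sentence is read as a story about arson and demolition. Covering idioms this way would mean writing a new rule for every figure of speech, which is exactly the kind of rule set that is hard to define concretely.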

But not all is grim! Thanks to machine learning we can actually do some really clever things to quickly extract and understand information from natural language! Let’s see how we can do that in a few lines of code with a couple of simple Python libraries.

Doing NLP — with Python code

For our walkthrough of how an NLP pipeline works, we’re going to use the following piece of text from Wikipedia as our running example:

Amazon.com, Inc., doing business as Amazon, is an American electronic commerce and cloud computing company based in Seattle, Washington, that was founded by Jeff Bezos on July 5, 1994. The tech giant is the largest Internet retailer in the world as measured by revenue and market capitalization, and second largest after Alibaba Group in terms of total sales. The amazon.com website started as an online bookstore and later diversified to sell video downloads/streaming, MP3 downloads/streaming, audiobook downloads/streaming, software, video games, electronics, apparel, furniture, food, toys, and jewelry. The company also produces consumer electronics — Kindle e-readers, Fire tablets, Fire TV, and Echo — and is the world’s largest provider of cloud infrastructure services (IaaS and PaaS). Amazon also sells certain low-end products under its in-house brand AmazonBasics.

A few dependencies

First we’ll install a few useful Python NLP libraries that will aid us in analysing this text:

# Installing spaCy, general Python NLP lib
pip3 install spacy

# Downloading the English dictionary model for spaCy
python3 -m spacy download en_core_web_lg

# Installing textacy, basically a useful add-on to spaCy
pip3 install textacy

Entity Analysis

Now that everything is installed, we can do a quick entity analysis of our text. Entity analysis will go through your text and identify all of the important words or “entities” in the text. When we say “important” what we really mean is words that have some kind of real-world semantic meaning or significance.

Check out the code below which does all of the entity analysis for us:

# coding: utf-8
import spacy

# Load spaCy's English NLP model
nlp = spacy.load('en_core_web_lg')

# The text we want to examine

text = "Amazon.com, Inc., doing business as Amazon, is an American electronic commerce and cloud computing company based in Seattle, Washington, that was founded by Jeff Bezos on July 5, 1994. The tech giant is the largest Internet retailer in the world as measured by revenue and market capitalization, and second largest after Alibaba Group in terms of total sales. The amazon.com website started as an online bookstore and later diversified to sell video downloads/streaming, MP3 downloads/streaming, audiobook downloads/streaming, software, video games, electronics, apparel, furniture, food, toys, and jewelry. The company also produces consumer electronics - Kindle e-readers, Fire tablets, Fire TV, and Echo - and is the world's largest provider of cloud infrastructure services (IaaS and PaaS). Amazon also sells certain low-end products under its in-house brand AmazonBasics."

# Parse the text with spaCy. This runs the entire NLP pipeline.
doc = nlp(text)

# Print every named entity the model detected, along with its label
for entity in doc.ents:
    print(entity.text, entity.label_)

We first load spaCy’s learned ML model and initialise the text we want to process. We then run the ML model on our text to extract the entities. When you run that code you’ll get output like the following (exact results can vary with the model version):

Amazon.com, Inc. ORG

Amazon ORG

American NORP

Seattle GPE

Washington GPE

Jeff Bezos PERSON

July 5, 1994 DATE

second ORDINAL

Alibaba Group ORG

amazon.com ORG

Fire TV ORG

Echo - LOC

PaaS ORG

Amazon ORG

AmazonBasics ORG

The short codes beside the text are labels that indicate the type of entity we are looking at. Looks like our model did a pretty good job! Jeff Bezos is indeed a person, the date is identified correctly, Amazon is an organization, and both Seattle and Washington are geopolitical entities (i.e., countries, cities, states, etc.). The only tricky ones it got wrong were Fire TV and Echo, which are actually products rather than an organization or a location. It also missed the other things that Amazon sells, “video downloads/streaming, MP3 downloads/streaming, audiobook downloads/streaming, software, video games, electronics, apparel, furniture, food, toys, and jewelry,” probably because they were in a big, uncapitalized list and thus looked fairly unimportant.

Overall our model has accomplished what we wanted to. Imagine we had a huge document full of hundreds of pages of text. This NLP model could quickly get you an overview of what the document is about and what the key entities in it are.
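As a rough sketch of what such an overview could look like, the snippet below groups entities by label using only the standard library. The `(text, label)` pairs are copied from the output listed above, and `summarize_entities` is a hypothetical helper name, not part of spaCy.

```python
from collections import defaultdict

# (text, label) pairs copied from the entity output shown above.
entities = [
    ("Amazon.com, Inc.", "ORG"), ("Amazon", "ORG"), ("American", "NORP"),
    ("Seattle", "GPE"), ("Washington", "GPE"), ("Jeff Bezos", "PERSON"),
    ("July 5, 1994", "DATE"), ("second", "ORDINAL"), ("Alibaba Group", "ORG"),
    ("amazon.com", "ORG"), ("Fire TV", "ORG"), ("Echo -", "LOC"),
    ("PaaS", "ORG"), ("Amazon", "ORG"), ("AmazonBasics", "ORG"),
]

def summarize_entities(pairs):
    """Group unique entity texts by label, preserving first-seen order."""
    grouped = defaultdict(list)
    for entity_text, label in pairs:
        if entity_text not in grouped[label]:
            grouped[label].append(entity_text)
    return dict(grouped)

summary = summarize_entities(entities)
for label, texts in summary.items():
    print(f"{label}: {', '.join(texts)}")
```

With a parsed spaCy document, the same pairs could be built with `[(ent.text, ent.label_) for ent in doc.ents]` instead of typing them by hand.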

Operating on entities

Let’s try to do something a bit more practical. Say you have the same block of text as above, but you would like to remove the names of all people and organizations automatically, for privacy reasons. Using spaCy’s entity labels, we can write a small scrub function that replaces any entity categories we don’t want to see. Here’s what that would look like:

# coding: utf-8
import spacy

# Load spaCy's English NLP model
nlp = spacy.load('en_core_web_lg')

# The text we want to examine

text = "Amazon.com, Inc., doing business as Amazon, is an American electronic commerce and cloud computing company based in Seattle, Washington, that was founded by Jeff Bezos on July 5, 1994. The tech giant is the largest Internet retailer in the world as measured by revenue and market capitalization, and second largest after Alibaba Group in terms of total sales. The amazon.com website started as an online bookstore and later diversified to sell video downloads/streaming, MP3 downloads/streaming, audiobook downloads/streaming, software, video games, electronics, apparel, furniture, food, toys, and jewelry. The company also produces consumer electronics - Kindle e-readers, Fire tablets, Fire TV, and Echo - and is the world's largest provider of cloud infrastructure services (IaaS and PaaS). Amazon also sells certain low-end products under its in-house brand AmazonBasics."

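# Replace a PERSON or ORG entity with the placeholder "[PRIVATE]"
def replace_entity_with_placeholder(token):
    if token.ent_iob != 0 and token.ent_type_ in ("PERSON", "ORG"):
        return "[PRIVATE] "
    # text_with_ws keeps the trailing whitespace (replaces the older token.string)
    return token.text_with_ws

# Loop through all the entities in a piece of text and apply entity replacement
def scrub(text):
    doc = nlp(text)
    # Merge each multi-word entity into a single token.
    # spaCy 3 removed Span.merge(); the retokenizer is the supported way to do this.
    with doc.retokenize() as retokenizer:
        for ent in doc.ents:
            retokenizer.merge(ent)
    tokens = map(replace_entity_with_placeholder, doc)
    return "".join(tokens)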

print(scrub(text))

[PRIVATE] , doing business as [PRIVATE] , is an American electronic commerce and cloud computing company based in Seattle, Washington, that was founded by [PRIVATE] on July 5, 1994. The tech giant is the largest Internet retailer in the world as measured by revenue and market capitalization, and second largest after [PRIVATE] in terms of total sales. The [PRIVATE] website started as an online bookstore and later diversified to sell video downloads/streaming, MP3 downloads/streaming, audiobook downloads/streaming, software, video games, electronics, apparel, furniture, food, toys, and jewelry. The company also produces consumer electronics - Kindle e-readers, Fire tablets, [PRIVATE] , and Echo - and is the world's largest provider of cloud infrastructure services (IaaS and [PRIVATE] ). [PRIVATE] also sells certain low-end products under its in-house brand [PRIVATE] .

That worked great! This is actually an incredibly powerful technique. People use the ctrl + f function on their computer all the time to find and replace words in their document. But with NLP, we can find and replace specific entities, taking into account their semantic meaning and not just their raw text.
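For contrast, here is a minimal sketch of what plain find-and-replace does on the first sentence of our example. `naive_scrub` is a hypothetical helper written for this comparison; it uses raw string matching and knows nothing about entities.

```python
# Plain find-and-replace only matches the exact strings we list.
snippet = ("Amazon.com, Inc., doing business as Amazon, is an American electronic "
           "commerce and cloud computing company based in Seattle, Washington, "
           "that was founded by Jeff Bezos on July 5, 1994.")

def naive_scrub(text, names):
    """Replace each listed name with a placeholder using raw string matching."""
    for name in names:
        text = text.replace(name, "[PRIVATE]")
    return text

scrubbed = naive_scrub(snippet, ["Amazon"])
print(scrubbed)
```

The raw-text approach leaves “Jeff Bezos” untouched because nobody listed him, and it cuts “Amazon.com, Inc.” in half, leaving “.com, Inc.” behind. The entity-based version avoids both problems because it works on whole entities and their labels rather than on a list of strings.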

Extracting information from text

The library that we installed previously, textacy, implements several common NLP information extraction algorithms on top of spaCy. It’ll let us do a few more advanced things than the simple out-of-the-box stuff.

One of the algorithms it implements is called Semi-structured Statement Extraction. This algorithm takes some of the information that spaCy’s NLP model was able to extract and, based on that, grabs more specific information about certain entities! In a nutshell, we can extract certain “facts” about the entity of our choice.
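To build some intuition for the idea of “entity, cue verb, fact” triples, here is a deliberately crude sketch using a regular expression. It is a stand-in for illustration only, not textacy’s algorithm, which works on a parsed spaCy document rather than on raw strings. `simple_statements` is a hypothetical name, and the sample sentences are abridged from the Washington, D.C. text used in this section.

```python
import re

def simple_statements(text, entity, cue="is"):
    """Yield (entity, cue, fact) for sentences shaped like '<entity> <cue> <fact>.'"""
    pattern = re.compile(
        rf"\b{re.escape(entity)}\s+{re.escape(cue)}\s+([^.]+)\."
    )
    for match in pattern.finditer(text):
        yield entity, cue, match.group(1).strip()

sample = ("Washington is the principal city of the Washington metropolitan area. "
          "Commuters raise the daytime population. "
          "Washington is home to many national monuments and museums.")

results = list(simple_statements(sample, "Washington"))
for subject, verb, fact in results:
    print(f"{subject} | {verb} | {fact}")
```

A pattern like this breaks as soon as the fact contains a period (for example “D.C.”) or the verb appears in another form such as “was”. Handling those cases is where the linguistic annotations from spaCy become useful.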

Let’s see what that looks like in code. For this one, we’re going to take the entire summary of Washington, D.C.’s Wikipedia page.

# coding: utf-8
import spacy
import textacy.extract

# Load spaCy's English NLP model
nlp = spacy.load('en_core_web_lg')

# The text we want to examine

text = """Washington, D.C., formally the District of Columbia and commonly referred to as Washington or D.C., is the capital of the United States of America.[4] Founded after the American Revolution as the seat of government of the newly independent country, Washington was named after George Washington, first President of the United States and Founding Father.[5] Washington is the principal city of the Washington metropolitan area, which has a population of 6,131,977.[6] As the seat of the United States federal government and several international organizations, the city is an important world political capital.[7] Washington is one of the most visited cities in the world, with more than 20 million annual tourists.[8][9]

The signing of the Residence Act on July 16, 1790, approved the creation of a capital district located along the Potomac River on the country's East Coast. The U.S. Constitution provided for a federal district under the exclusive jurisdiction of the Congress and the District is therefore not a part of any state. The states of Maryland and Virginia each donated land to form the federal district, which included the pre-existing settlements of Georgetown and Alexandria. Named in honor of President George Washington, the City of Washington was founded in 1791 to serve as the new national capital. In 1846, Congress returned the land originally ceded by Virginia; in 1871, it created a single municipal government for the remaining portion of the District.

Washington had an estimated population of 693,972 as of July 2017, making it the 20th largest American city by population. Commuters from the surrounding Maryland and Virginia suburbs raise the city's daytime population to more than one million during the workweek. The Washington metropolitan area, of which the District is the principal city, has a population of over 6 million, the sixth-largest metropolitan statistical area in the country.

All three branches of the U.S. federal government are centered in the District: U.S. Congress (legislative), President (executive), and the U.S. Supreme Court (judicial). Washington is home to many national monuments and museums, which are primarily situated on or around the National Mall. The city hosts 177 foreign embassies as well as the headquarters of many international organizations, trade unions, non-profit, lobbying groups, and professional associations, including the Organization of American States, AARP, the National Geographic Society, the Human Rights Campaign, the International Finance Corporation, and the American Red Cross.

A locally elected mayor and a 13‑member council have governed the District since 1973. However, Congress maintains supreme authority over the city and may overturn local laws. D.C. residents elect a non-voting, at-large congressional delegate to the House of Representatives, but the District has no representation in the Senate. The District receives three electoral votes in presidential elections as permitted by the Twenty-third Amendment to the United States Constitution, ratified in 1961."""

# Parse the text with spaCy
# Our 'document' variable now contains a parsed version of text.
document = nlp(text)

# Extract semi-structured statements about "Washington".
# entity and cue are passed by keyword, which should work with both older and
# newer textacy releases (newer ones require keywords; "be" was the old default cue).
statements = textacy.extract.semistructured_statements(document, entity="Washington", cue="be")

print("**** Information from Washington's Wikipedia page ****")
count = 1
for statement in statements:
    subject, verb, fact = statement
    print(str(count) + " - Statement: ", statement)
    print(str(count) + " - Fact: ", fact)
    count += 1

**** Information from Washington's Wikipedia page ****
1 - Statement: (Washington, is, the capital of the United States of America.[4)
1 - Fact: the capital of the United States of America.[4
2 - Statement: (Washington, is, the principal city of the Washington metropolitan area, which has a population of 6,131,977.[6)
2 - Fact: the principal city of the Washington metropolitan area, which has a population of 6,131,977.[6
3 - Statement: (Washington, is, home to many national monuments and museums, which are primarily situated on or around the National Mall)
3 - Fact: home to many national monuments and museums, which are primarily situated on or around the National Mall

Our NLP model found 3 useful facts about Washington, D.C. from that text:

(1) Washington is the capital of the USA

(2) Washington is the principal city of the Washington metropolitan area, which has a population of 6,131,977

(3) Washington is home to many national monuments and museums

The best part is that these are arguably among the most important pieces of information within that block of text!

Going deeper with NLP

This concludes our easy introduction to NLP! We’ve learned a ton, but this was only a small taste…

There are many more great applications of NLP out there like language translation, chat bots, and more specific and intricate analyses of text documents. Much of this today is done using deep learning, particularly transformer-based models like BERT and GPT, which have largely replaced earlier architectures like RNNs and LSTMs.

If you’d like to play around with more NLP yourself, looking through the spaCy docs and textacy docs is a great place to start! You’ll see lots of examples of the ways you can work with parsed text and extract very useful information from it. Everything with spaCy is quick and easy and you can get some really great value out of it. Once you've got that down, it’s time to do bigger and better things with deep learning!

Frequently Asked Questions

What is natural language processing (NLP)?

NLP is a branch of artificial intelligence focused on enabling computers to understand, interpret and process human language.

Why is NLP challenging for computers?

Human language is unstructured, filled with idioms, ambiguity, and context-dependent meanings, making it difficult for computers to interpret without advanced models.

What are some real-world applications of NLP?

NLP powers tasks like language translation, semantic understanding, text summarization, and information extraction from large documents.

Which Python libraries are useful for NLP?

spaCy and textacy are two libraries highlighted for performing tasks like entity recognition and semi-structured statement extraction.