Carmera’s engineers want real-time updates to the maps they’re building for autonomous vehicles. They also want to map every road in the world. Unfortunately, doing both isn’t possible.

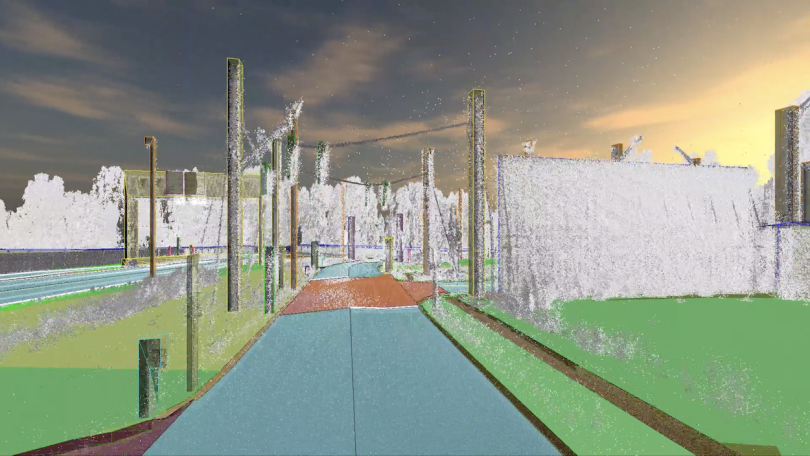

Carmera builds its maps using LiDAR, a sensing method that measures distances with pulsed lasers, as well as RGB color scans, GPS data and inertial measurements. But those high-precision instruments only accomplish half the job. The maps still need to be maintained, and the startup found a distinctive way to do it: sticking mobile phones onto vehicle fleets and letting them collect video data as they travel around.

Voila: crowdsourced maps.

But all that data has to be processed through edge or cloud computing. For a handful of cities, it’s doable. For the entire earth, it gets a little pricey.

“It would take hundreds of millions of dollars to process once,” said Ethan Sorrelgreen, Carmera’s chief product officer. “And you’d have to process it every day.”

As Carmera works to usher in the adoption of autonomous vehicles, Sorrelgreen’s team performs an ongoing balancing act of speed, scale and cost.

How Carmera optimizes its data management — and spending

Autonomous vehicles rely on a combination of cameras and sensors to navigate. Adding a base map, like the ones Carmera generates, helps a vehicle better understand its location, anticipate the road ahead and plan its course.

A vehicle driving behind a large truck, for example, may not be able to ‘see’ an approaching stoplight. By referencing a base map, it could still know the stoplight is there. Base maps could also make autonomous vehicles less vulnerable to bad actors who try to trick the car’s cameras.

Both functions are a draw for companies working on self-driving technology. Carmera’s customers include Voyage, a driverless ride-hailing service operating in retirement communities, and Toyota.

Signing a large original equipment manufacturer like Toyota was a big win for a startup like Carmera. But working with OEMs came with problems as well, specifically the cost of processing an enormous amount of data.

“There’s this general attitude that cloud processing is free, and you basically can process as much data as you want to,” Sorrelgreen said. “When we were working with smaller mobility-as-a-service companies, this wasn’t as much of an issue. But when we started working with major automakers and looking at continent-scale or world-scale maps, our existing paradigm for processing data needed to change.”

Sorrelgreen, Carmera CTO Justin Day and a couple of senior engineers sat down in a room to decide what to do. Their goal, Sorrelgreen said, was to walk out with a potential solution as quickly as possible.

“Analysis paralysis kills you when you’re a startup,” Sorrelgreen said. “Implement something and then make it better, as opposed to trying to come up with the perfect idea up front.”

Two weeks later, they had a plan. Within a quarter, they were using three critical questions to help build the most accurate maps possible at the lowest cost.

Question one: Has somebody already built this?

At first, the engineers at Carmera tried to build their own hardware to mount on fleets and process video data. But after six months of product development, they made an important discovery.

“We realized we were just building a mobile phone,” Sorrelgreen said.

With that realization, they shifted gears and started looking for the best cellphone for their purposes. A more advanced model would make data processing easier, but it would also drive the cost up. They decided to save money on the hardware side.

“We don’t use the latest Galaxy mobile phone to do data processing,” Sorrelgreen said. “We’re using five-year-old, little Motorolas that are very, very old in terms of today’s technology, and that means we’re using processors that are nowhere near as fast as what you can get.”

Given that limitation, the team is continually experimenting with how best to allocate the phones’ processing power. In other words, how robust can their modeling get before it requires an expensive hardware upgrade?

“We try to keep the phones super simple so we can deploy them on more cars,” Carmera senior engineer Alana Ohno said. “But we’ve played around with attaching more machine learning onto the phone.”

Question two: How can we get more from less?

Not all the data the company’s cameras collect is worth processing, and building a reliable computer vision model on an outdated mobile phone is no easy task.

“We have to get really, really smart about how we break the problem down, because we can’t just throw the latest and greatest technology at it,” Sorrelgreen said.

The model, for instance, must be able to identify pertinent objects, like orange traffic cones that indicate road work. But running an object classifier algorithm in real time on the phones would be impossible. So the team created a few layers of algorithms to help determine what gets processed on the phone itself and what gets sent to the cloud for further analysis.

For example, if a phone has four cores, one may process a thread of key frames in the video data, hunting for the color orange. Once it finds the color, it sends that frame to a second core, which runs a rudimentary object classifier. If the algorithm determines that video data may contain objects of value — like traffic cones — the data is uploaded to the cloud.
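As a rough illustration of that layered approach, here is a minimal Python sketch of a two-stage filter: a cheap color gate runs on every key frame, and a costlier classifier runs only on the frames that pass. It is not Carmera's code. The pixel bounds, the thresholds and the names `is_orange`, `orange_fraction` and `triage` are all assumptions made for the example, and the stages run one after another here rather than on separate cores.

```python
from typing import Callable, Iterable, List, Tuple

Pixel = Tuple[int, int, int]   # (red, green, blue), each 0-255
Frame = List[Pixel]            # a key frame flattened to a list of pixels


def is_orange(pixel: Pixel) -> bool:
    """Crude color test. The bounds are illustrative assumptions."""
    r, g, b = pixel
    return r >= 200 and 80 <= g <= 170 and b <= 80


def orange_fraction(frame: Frame) -> float:
    """Share of pixels in the frame that pass the orange test."""
    if not frame:
        return 0.0
    return sum(is_orange(p) for p in frame) / len(frame)


def triage(
    key_frames: Iterable[Frame],
    classifier: Callable[[Frame], float],
    color_gate: float = 0.05,    # hypothetical minimum share of orange pixels
    upload_score: float = 0.1,   # hypothetical, kept low to tolerate false positives
) -> List[int]:
    """Return the indices of key frames worth uploading to the cloud."""
    to_upload = []
    for index, frame in enumerate(key_frames):
        # Stage 1: cheap color gate, run on every key frame.
        if orange_fraction(frame) < color_gate:
            continue
        # Stage 2: costlier classifier, run only on frames that passed stage 1.
        if classifier(frame) >= upload_score:
            to_upload.append(index)
    return to_upload


# Two synthetic frames: plain gray asphalt, and asphalt with 20% orange pixels.
road = [(90, 90, 90)] * 100
cones = [(90, 90, 90)] * 80 + [(255, 120, 0)] * 20

# orange_fraction stands in for a real on-device classifier here.
print(triage([road, cones], classifier=orange_fraction))  # [1]
```

The point of the ordering is that the expensive step never sees most frames, which is what makes the approach workable on a slow processor.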

Other threads look through video for diamond-shaped road signs or color combinations that could be traffic lights. But these naive classifiers — even the one that hunts for the color orange — don’t always get it right.

“Once it found us a Tropicana orange juice delivery truck,” Sorrelgreen said. “There was a lot of orange, but they were actual oranges.”

That, however, isn’t a bad thing. Carmera’s developers deliberately tuned the algorithms to err on the side of false positives. A fresh map is a safe map, Sorrelgreen said, and reviewing too many details is far better than missing an important one, especially when your clients are major automakers.
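One common way to express that trade-off, sketched below in Python, is to pick a classifier's decision threshold from labeled examples so that it catches a target share of the truly relevant frames, then accept whatever false positives come with it. The article does not say how Carmera tunes its models, so the approach, the sample scores and the function names `threshold_for_recall` and `false_positives` are all assumptions.

```python
import math
from typing import List, Tuple

Scored = List[Tuple[float, bool]]   # (classifier score, frame is truly relevant)


def threshold_for_recall(scored: Scored, target_recall: float) -> float:
    """Highest threshold that still flags at least target_recall of relevant frames.

    A frame is flagged when its score is >= the returned threshold.
    """
    positives = sorted((s for s, relevant in scored if relevant), reverse=True)
    if not positives:
        raise ValueError("need at least one relevant example")
    # Number of relevant frames that must be flagged to reach the target.
    needed = max(1, math.ceil(target_recall * len(positives)))
    return positives[needed - 1]


def false_positives(scored: Scored, threshold: float) -> int:
    """Count irrelevant frames that would be flagged at this threshold."""
    return sum(1 for s, relevant in scored if s >= threshold and not relevant)


# Made-up validation scores: four relevant frames, three irrelevant ones.
samples = [
    (0.9, True), (0.7, True), (0.4, True), (0.2, True),
    (0.6, False), (0.3, False), (0.1, False),
]

strict = threshold_for_recall(samples, 0.5)    # 0.7, misses half the relevant frames
lenient = threshold_for_recall(samples, 1.0)   # 0.2, misses none
print(false_positives(samples, strict), false_positives(samples, lenient))  # 0 2
```

In this toy data, catching every relevant frame costs two extra frames for review, which is the kind of price the team says it is willing to pay.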

The cloud processing system in charge of reviewing those details is known at Carmera as “the cortex,” and aptly so. It acts like a brain, sifting through sensory data from the mobile phones and determining what’s important enough to receive further review, and what that review should look like. If video from one phone indicates a roadblock, the cortex may request a few additional frames from other vehicles in the same area. And sending single frames, instead of more video, saves processing power.
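To make the idea of asking nearby vehicles for a few extra frames concrete, the Python sketch below picks the closest vehicles within a fixed radius of a reported event, using the standard haversine great-circle distance. The radius, the request limit, the fleet data structure and the names `haversine_m` and `vehicles_to_ask` are hypothetical. The article does not describe how the cortex actually selects vehicles.

```python
import math
from typing import Dict, List, Tuple

LatLon = Tuple[float, float]      # (latitude, longitude) in degrees
EARTH_RADIUS_M = 6_371_000.0      # mean Earth radius in meters


def haversine_m(a: LatLon, b: LatLon) -> float:
    """Great-circle distance between two points, in meters."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))


def vehicles_to_ask(
    event: LatLon,
    fleet: Dict[str, LatLon],
    radius_m: float = 200.0,   # hypothetical search radius
    max_requests: int = 3,     # hypothetical cap on frame requests
) -> List[str]:
    """Return IDs of the nearest vehicles within radius_m, closest first."""
    in_range = sorted(
        (distance, vehicle_id)
        for vehicle_id, position in fleet.items()
        if (distance := haversine_m(event, position)) <= radius_m
    )
    return [vehicle_id for _, vehicle_id in in_range[:max_requests]]


roadblock = (40.7128, -74.0060)
fleet = {
    "van-a": (40.7129, -74.0060),   # roughly 11 meters away
    "van-b": (40.7138, -74.0060),   # roughly 111 meters away
    "van-c": (40.8000, -74.0000),   # several kilometers away
}
print(vehicles_to_ask(roadblock, fleet))  # ['van-a', 'van-b']
```

Capping the number of requests mirrors the cost argument in the text: a handful of single frames is cheaper to move and process than more video.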

Like any other brain, the cortex uses a variety of variables to make its decisions. Data from a rarely traveled road carries more weight than that from a busy city street, for instance, so the system collects observations each time a car passes through a less-traversed area.

An observation’s potential impact on traffic plays a role as well. If a car-mounted camera picks up a concrete barrier, that obstruction is likely to be there for a while, and the cortex knows it doesn’t need to collect data on that area again right away. Traffic cones, however, could be gone in a day, so the system will check in more frequently.

Lastly, the system prioritizes detection over clearance. That means discovering new impactful events is more important than noticing when old ones are cleared away.
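The three factors described above (how rarely a road is traveled, how long an obstruction is likely to last, and detection over clearance) could be combined into a single priority score. The Python sketch below shows one way to do that. The weights, the re-check intervals and the names `Candidate` and `priority` are invented for illustration and are not taken from Carmera's system.

```python
from dataclasses import dataclass

# Hypothetical hours before each kind of object is worth re-checking.
RECHECK_HOURS = {"concrete_barrier": 336.0, "traffic_cone": 12.0}
DEFAULT_RECHECK_HOURS = 48.0


@dataclass
class Candidate:
    kind: str                      # what the phone thinks it saw
    road_passes_per_day: float     # how often fleet vehicles drive this road
    hours_since_last_look: float   # ignored for new detections
    is_new_detection: bool         # False means checking whether a known event cleared


def priority(c: Candidate) -> float:
    """Higher score means collect and review this observation sooner."""
    # Rarely traveled roads carry more weight than busy ones.
    rarity = 1.0 / (1.0 + c.road_passes_per_day)
    # Short-lived objects become stale quickly; new detections count as fully stale.
    recheck = RECHECK_HOURS.get(c.kind, DEFAULT_RECHECK_HOURS)
    staleness = 1.0 if c.is_new_detection else min(c.hours_since_last_look / recheck, 1.0)
    # Detection outranks clearance (the factor of 2 is an arbitrary choice).
    weight = 2.0 if c.is_new_detection else 1.0
    return rarity * staleness * weight


queue = [
    Candidate("concrete_barrier", 10, 12, False),
    Candidate("traffic_cone", 10, 12, False),
    Candidate("traffic_cone", 10, 0, True),
]
# Most urgent first: the new cone, then the known cone, then the known barrier.
for candidate in sorted(queue, key=priority, reverse=True):
    print(candidate.kind, candidate.is_new_detection, round(priority(candidate), 3))
```

Multiplying the factors is only one option. A real system might use learned weights or hard rules, but the ordering it produces should follow the same three preferences.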

Carmera built the cortex to be intelligent. Nonetheless, it still needs some help from human brains. Before new data is integrated into the company’s maps, it gets passed to an operations team member for final review.

“The work the human does is meant to be very, very simple, but it’s also really important because, with machine learning models, you can never really test with 100 percent accuracy,” Ohno said.

Question three: How will we keep improving after deployment?

As the Carmera team selected candidates for algorithmic review — or details of value to map users — some were common sense. The color orange, for example, is a good thing to watch for while you’re driving. Others, however, came from customer feedback.

Some companies, Ohno said, want to avoid roads with construction or police activity altogether because their sensors aren’t equipped to navigate those obstructions. Others want their maps to display every relevant detail, down to metal manhole covers.

“It’s not that the customer is always right, but the customer always has more information, and you should feed that into your development,” Sorrelgreen said. “Whether they’re right or not about whether you should be implementing something, they’re close to the problem and know what their needs are.”

Going forward, Carmera will continue to tweak its algorithms daily, he said. Each new customer will come with its own needs and constraints, sending the product team back to the first of its three questions.

It’s a challenge, but Sorrelgreen isn’t too worried. The company is preparing for demand at a global scale, he said, because Carmera’s maps give self-driving vehicles an important safety feature that sensors do not: wisdom.

“In order to give an autonomous vehicle a sort of intuition, you need to give it this rich, very detailed information from which to make those inferences.”